Imagine a capable AI assistant running on your phone. It responds instantly, understands your language, and works fully without an internet connection. Your information stays on your device, and there is no charge per question. That is the future Sarvam Edge is building in India.

Sarvam Edge brings AI models directly onto our devices and changes how we interact with technology. This guide explains what Sarvam Edge is and what it can do. It also shows how you can start building today.



Why On-Device AI is a Game-Changer

Sarvam Edge addresses the key limitations of cloud-based AI. It moves the intelligence from remote servers directly onto the handheld device, which makes for a better user experience.

Here is why this matters:

- Instant Response (Low Latency): The AI runs on your device, so there is no network round-trip delay. This is essential for seamless voice assistants and live translators.

- Full Privacy: All processing happens locally. Neither your data nor your voice leaves your device.

- Anywhere, Anytime: Sarvam Edge does not require the internet. It stays reliable where connectivity is poor and even works during a flight.

- No Per-Query Cost: The AI runs on your device's own hardware, which eliminates the usage charges of cloud APIs. This makes AI tools affordable and accessible to everyone.


Sarvam Edge: A Deep Dive into Performance

The Sarvam Edge models are small but powerful. They are optimized for consumer hardware, and the performance data reflects their potential.

On-Device Speech Recognition

Sarvam has developed a model that recognizes 10 major Indic languages. It automatically detects which language you are speaking.

- Model Size: 74 million parameters.

- Device Footprint: ~294MB.

- Speed: It responds in under 300 milliseconds on a Qualcomm Snapdragon 8 Gen 3. It processes audio 8.5 times faster than real-time.
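To make the "8.5 times faster than real-time" figure concrete, here is a minimal sketch of the arithmetic. The function name is illustrative and the speed-up is assumed to be constant across clip lengths, which real hardware will not guarantee.

```python
def processing_seconds(audio_seconds: float, speedup: float) -> float:
    """Estimate how long it takes to process a clip at a given real-time speed-up."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return audio_seconds / speedup


# Speed-up quoted above for speech recognition on a Snapdragon 8 Gen 3.
ASR_SPEEDUP = 8.5

# A one-minute recording would take roughly seven seconds to transcribe.
print(round(processing_seconds(60.0, ASR_SPEEDUP), 2))  # 7.06
```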

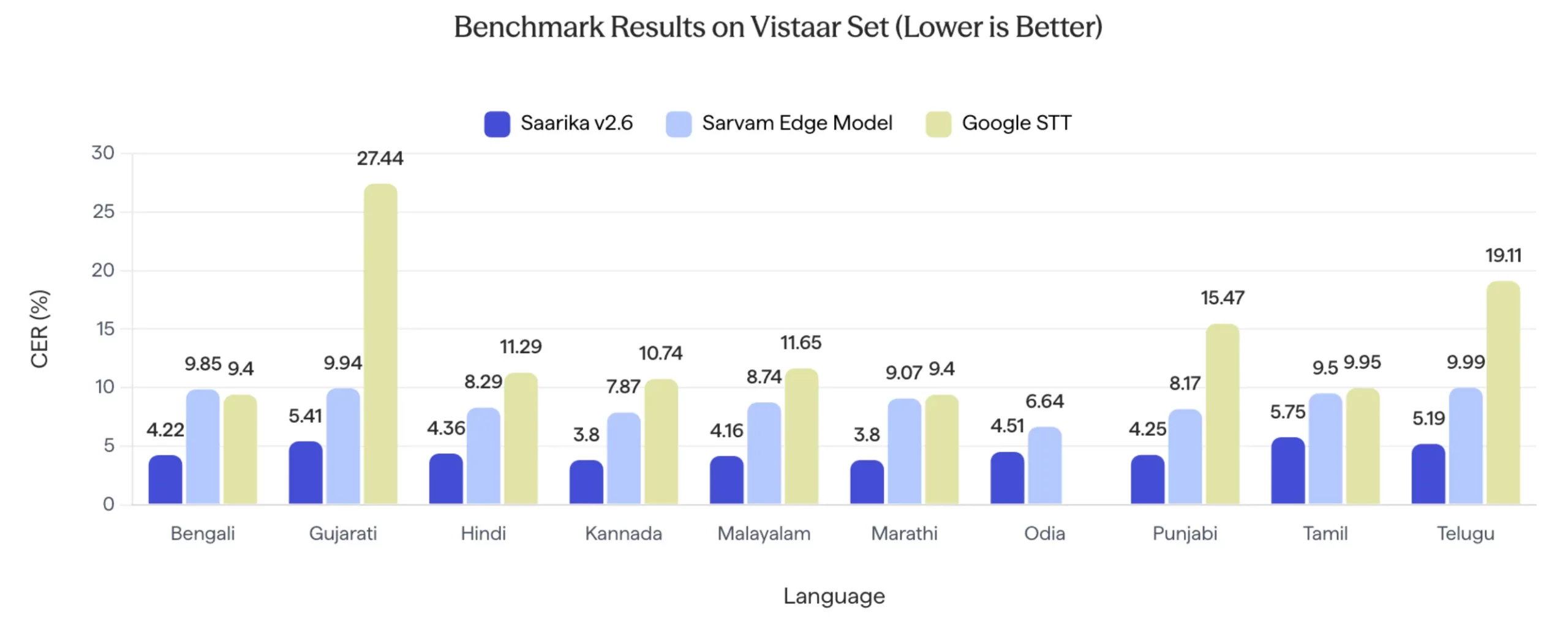

Accuracy is one of the model's strengths. It was evaluated on the Vistaar benchmark set. The results show a low Character Error Rate (CER), where a lower score is better.

As the chart shows, the Sarvam Edge model generally outperforms Google STT. It is accurate in languages such as Bengali, Hindi, and Punjabi, which makes it a dependable option for understanding Indian voices.
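For reference, CER is commonly computed as the character-level edit distance between the reference transcript and the model's output, divided by the length of the reference. The sketch below follows that common definition; it is not Sarvam's evaluation code, and it counts Unicode code points, so Indic scripts with combining marks may need grapheme-aware handling that is omitted here.

```python
def edit_distance(reference: str, hypothesis: str) -> int:
    """Levenshtein distance between two strings, computed one row at a time."""
    previous = list(range(len(hypothesis) + 1))
    for i, ref_char in enumerate(reference, start=1):
        current = [i]
        for j, hyp_char in enumerate(hypothesis, start=1):
            substitution = previous[j - 1] + (ref_char != hyp_char)
            deletion = previous[j] + 1
            insertion = current[j - 1] + 1
            current.append(min(substitution, deletion, insertion))
        previous = current
    return previous[-1]


def character_error_rate(reference: str, hypothesis: str) -> float:
    """Edit distance divided by reference length; lower is better."""
    if not reference:
        raise ValueError("reference must not be empty")
    return edit_distance(reference, hypothesis) / len(reference)


# Three edits against a six-character reference.
print(character_error_rate("kitten", "sitting"))  # 0.5
```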


On-Device Speech Synthesis (Text-to-Speech)

This model produces natural-sounding audio. It supports 10 Indian languages and 8 voices.

- Model Size: 24 million parameters.

- Device Footprint: Just ~60MB.

- Speed: On a Samsung Galaxy S25 Ultra, it starts speaking in 260 milliseconds. It generates audio 5 times faster than real-time.

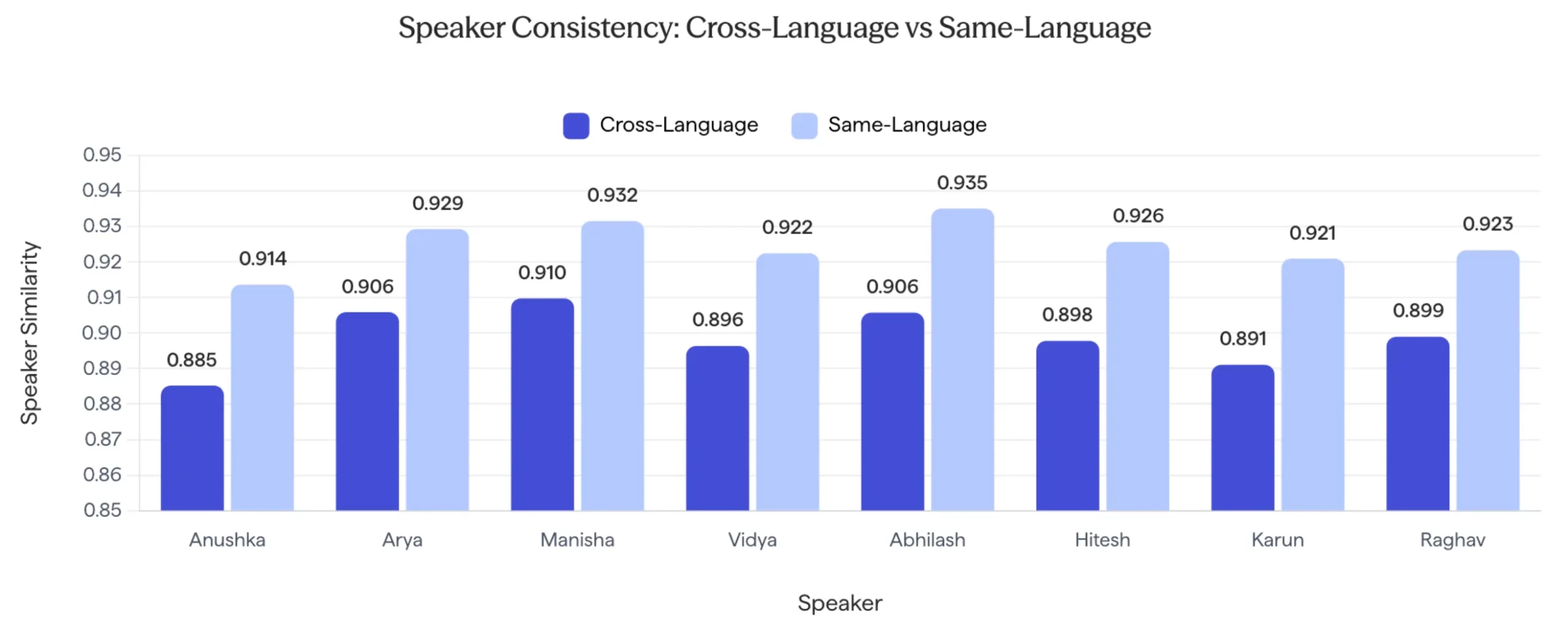

With a good voice model, the same speaker sounds like the same person regardless of the language. Sarvam used Speaker Similarity scores to measure this. The higher the score, the greater the consistency.

As the graph shows, similarity scores are high for every speaker. The voice stays consistent both within a single language and across different languages, which makes for a smooth and natural listening experience.

On-Device Translation

A single translation model handles 11 languages: 10 Indic languages and English. It can translate directly between any of the resulting 110 language pairs.
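The figure of 110 comes from counting ordered pairs: each of the 11 languages can be a source, with any of the other 10 as the target. A quick check, using placeholder language codes because the article does not list all ten Indic languages:

```python
from itertools import permutations

# Placeholder codes: English plus ten Indic languages (names are assumptions).
languages = ["en"] + [f"indic_{i:02d}" for i in range(1, 11)]

# Ordered (source, target) pairs, excluding a language paired with itself.
pairs = list(permutations(languages, 2))

print(len(languages))  # 11
print(len(pairs))      # 110
```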

- Model Size: ~150 million parameters.

- Device Footprint: ~334MB.

- Speed: It provides the first translated token in about 200 milliseconds. It has a throughput of 30 tokens per second on a Snapdragon 8 Gen 3 chip.
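These two numbers can be combined into a rough latency estimate for a full translation. The sketch below assumes the first token arrives after the quoted 200 milliseconds and every later token arrives at a steady 30 tokens per second; the function name and the constant-rate assumption are illustrative only.

```python
def estimated_translation_seconds(
    num_tokens: int,
    first_token_seconds: float = 0.2,
    tokens_per_second: float = 30.0,
) -> float:
    """Rough end-to-end time: first-token latency plus steady-state decoding."""
    if num_tokens <= 0:
        return 0.0
    remaining_tokens = num_tokens - 1
    return first_token_seconds + remaining_tokens / tokens_per_second


# A 31-token translation: 0.2 s for the first token, 1.0 s for the other 30.
print(round(estimated_translation_seconds(31), 2))  # 1.2
```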

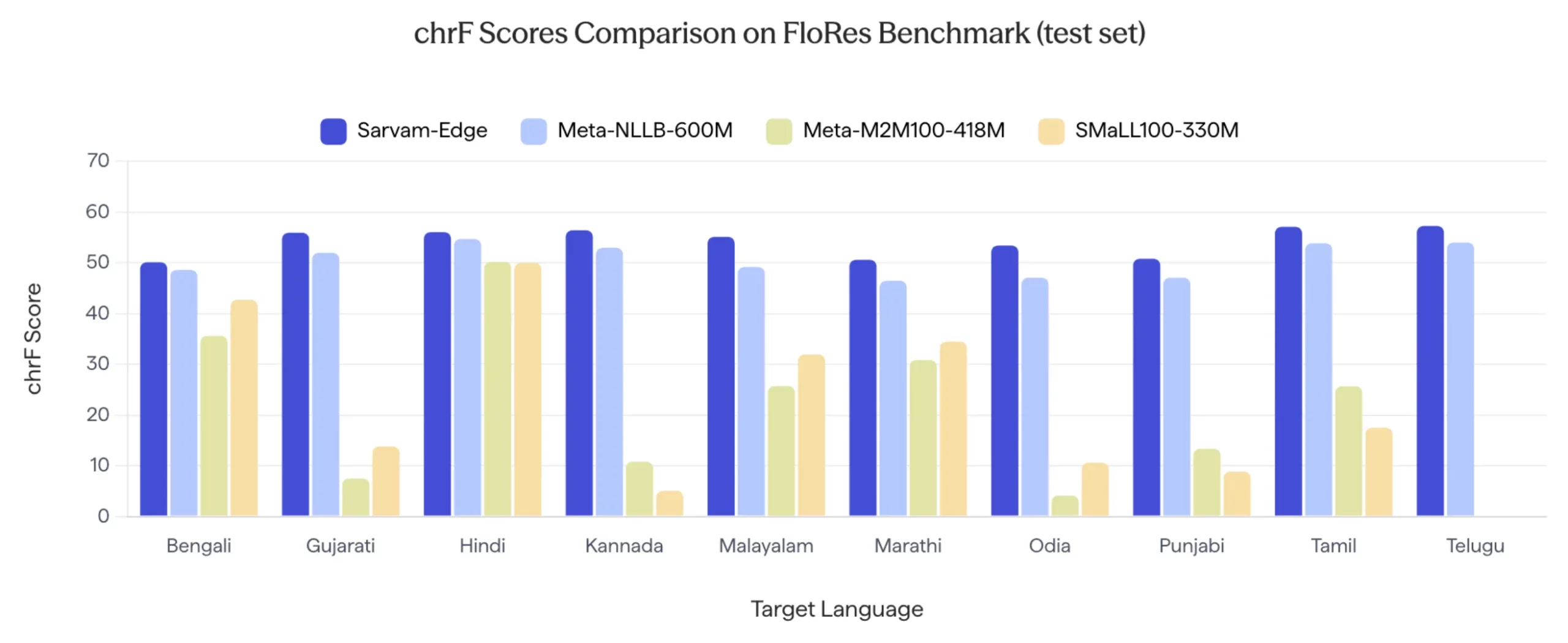

Translation quality was assessed using the chrF score on the FLORES benchmark. chrF measures the character n-gram overlap between the model's output and a reference translation, and a higher score is better.

The Sarvam Edge model scores higher than other prominent models, such as Meta's NLLB-600M, across all the Indian languages tested. This points to high quality and accuracy on multilingual tasks.
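To give a feel for what chrF measures, here is a simplified sketch: it averages character n-gram precision and recall for n from 1 to 6 and combines them into an F-score that weights recall more heavily (beta = 2). This is an illustration, not the reference implementation used for published scores, and details such as whitespace handling differ from standard tools.

```python
from collections import Counter


def char_ngrams(text: str, n: int) -> Counter:
    """Count character n-grams, ignoring spaces (a simplification)."""
    chars = text.replace(" ", "")
    return Counter(chars[i:i + n] for i in range(len(chars) - n + 1))


def simple_chrf(hypothesis: str, reference: str, max_n: int = 6, beta: float = 2.0) -> float:
    """Simplified chrF on a 0-100 scale; higher is better."""
    precisions, recalls = [], []
    for n in range(1, max_n + 1):
        hyp = char_ngrams(hypothesis, n)
        ref = char_ngrams(reference, n)
        if not hyp or not ref:
            continue  # one side is too short for this n-gram order
        overlap = sum((hyp & ref).values())
        precisions.append(overlap / sum(hyp.values()))
        recalls.append(overlap / sum(ref.values()))
    if not precisions:
        return 0.0
    precision = sum(precisions) / len(precisions)
    recall = sum(recalls) / len(recalls)
    if precision + recall == 0:
        return 0.0
    return 100 * (1 + beta**2) * precision * recall / (beta**2 * precision + recall)


print(simple_chrf("the cat sat", "the cat sat"))  # 100.0
print(simple_chrf("abc", "xyz"))                  # 0.0
```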

Sarvam Edge in Action

Although the Sarvam Edge SDK for running models directly on hardware is not yet open source, the team has shared some examples of the system in practice. These demos show how practical the models are on everyday hardware.

1. Vision OCR on MacBook Pro

The first example shows local Optical Character Recognition (OCR) on a laptop. The system converts an image containing Odia text into plain text while entirely offline. It runs at more than 40 tokens per second, and peak memory stays under 10 GB.

This demo is a significant win for accessibility. Odia is a complex script, and handling it locally on an ordinary laptop shows how well optimized the model is. A peak memory of under 10 GB is reasonable: the model can run alongside other applications without crashing the system.

2. Voice-Driven Stock Brokerage on Android

This demo shows a financial assistant on Android that handles stock purchases and portfolio queries by voice. All speech-to-text and text-to-speech processing happens on the device. Balances can be checked, or stocks can be purchased even without an internet connection.

Privacy is the most important factor here. People are often wary of sending financial information to the cloud, and handling these requests locally builds trust. The low-latency experience also matters in fast-moving markets where timing is critical.

3. Real-Time Multilingual Translation

In this demo, two people converse in different Indian languages while the system translates their speech in real time. It relies on a chain of local models for recognition, translation, and synthesis. The conversation sounds natural, and the original meaning is preserved.
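The recognition, translation, and synthesis chain described above can be sketched as three functions composed in order. Since the on-device SDK is not public, every name here is hypothetical and the three stages are stand-in stubs; only the shape of the pipeline is the point.

```python
from typing import Callable

# Hypothetical stage signatures; the real SDK may look entirely different.
Recognizer = Callable[[bytes], str]             # audio -> source text
Translator = Callable[[str, str, str], str]     # text, source, target -> text
Synthesizer = Callable[[str, str], bytes]       # text, language -> audio


def translate_speech(
    audio: bytes,
    source_lang: str,
    target_lang: str,
    recognize: Recognizer,
    translate: Translator,
    synthesize: Synthesizer,
) -> bytes:
    """Run speech through recognition, translation, and synthesis in sequence."""
    source_text = recognize(audio)
    target_text = translate(source_text, source_lang, target_lang)
    return synthesize(target_text, target_lang)


# Stub stages so the sketch runs without any model.
def fake_recognize(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_translate(text: str, source: str, target: str) -> str:
    return f"[{source}->{target}] {text}"


def fake_synthesize(text: str, language: str) -> bytes:
    return text.encode("utf-8")


result = translate_speech(b"hello", "en", "hi", fake_recognize, fake_translate, fake_synthesize)
print(result)  # b'[en->hi] hello'
```

Passing the stages in as plain callables keeps the pipeline independent of any particular backend, so hosted API calls could later be swapped for local models without changing the calling code.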

This solves a major communication problem in a country with many languages. For translation to feel natural, latency must be close to zero. Eliminating the cloud round-trip makes fluid, cross-language conversations possible anywhere.

Conclusion

Sarvam Edge marks a significant shift in India's AI landscape. It takes the power of large cloud servers and puts it directly in your pocket. The benchmarks show that local models can be both fast and accurate, handling complex Indian languages with low latency and high throughput. You do not need to wait for the on-device SDK to launch. You can build applications with the hosted APIs today and move to local processing as soon as it becomes available. This is a sound strategy: you get the capabilities you need now and complete privacy later. On-device AI will make technology more personal and reliable for everyone.

Frequently Asked Questions

Sarvam Edge's key benefits are instant responses and complete user privacy. It also works offline and has no per-query cloud costs.

The on-device models support 10 major Indic languages and English. This covers a wide range of speech and translation needs.

Direct on-device deployment is coming soon. You can build apps with the same features using Sarvam’s hosted APIs right now.

New users get ₹1,000 in free credits. After that, services have clear usage-based pricing, like ₹30 per hour for speech-to-text.

The official Sarvam AI documentation has API references and guides. It also provides information on SDKs for Python and JavaScript.
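To put the free credits mentioned above in perspective, here is a small back-of-the-envelope calculation. It assumes the whole ₹1,000 balance is spent on speech-to-text at the quoted ₹30 per hour and that nothing else is billed; actual pricing rules may differ.

```python
def hours_covered(credit_rupees: float, rupees_per_hour: float) -> float:
    """How many hours of a service a credit balance covers at a flat hourly rate."""
    if rupees_per_hour <= 0:
        raise ValueError("rate must be positive")
    return credit_rupees / rupees_per_hour


# Figures quoted above: ₹1,000 in free credits, ₹30 per hour of speech-to-text.
print(round(hours_covered(1000, 30), 1))  # 33.3
```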
