Overview

- A hands-on tutorial to build your own convolutional neural network (CNN) in PyTorch

- We will be working on an image classification problem – a classic and widely used application of CNNs

- This is part of Analytics Vidhya’s series on PyTorch where we introduce deep learning concepts in a practical format

Introduction

I’m enthralled by the power and capability of neural networks. Almost every breakthrough happening in the machine learning and deep learning space right now has neural network models at its core.

This is especially prevalent in the field of computer vision. Neural networks have opened up possibilities of working with image data – whether that’s simple image classification or something more advanced like object detection. In short, it’s a goldmine for a data scientist like me!

Simple neural networks are always a good starting point when we’re solving an image classification problem using deep learning. But they do have limitations and the model’s performance fails to improve after a certain point.

This is where convolutional neural networks (CNNs) have changed the playing field. They are ubiquitous in computer vision applications. And it’s honestly a concept I feel every computer vision enthusiast should pick up quickly.

This article is a continuation of my new series where I introduce you to new deep learning concepts using the popular PyTorch framework. In this article, we will see why convolutional neural networks are useful and how they can improve our model’s performance. We will also implement a CNN in PyTorch.

If you want to comprehensively learn about CNNs, you can enrol in this free course: Convolutional Neural Networks from Scratch

This is the second article of this series and I highly recommend going through the first part before moving forward with this article.

Also, the third article of this series is now live. It covers how to use pre-trained models and apply transfer learning using PyTorch.

A Brief Overview of PyTorch, Tensors and NumPy

Let’s quickly recap what we covered in the first article. We discussed the basics of PyTorch and tensors, and also looked at how PyTorch is similar to NumPy.

PyTorch is a Python-based library that provides functionalities such as:

- TorchScript for creating serializable and optimizable models

- Distributed training to parallelize computations

- Dynamic computation graphs, which are built on the go as the code runs, and many more

Tensors in PyTorch are similar to NumPy’s n-dimensional arrays, with the addition that they can also be used on GPUs. Performing operations on these tensors is very similar to performing operations on NumPy arrays. This makes PyTorch very user-friendly and easy to learn.

In part 1 of this series, we built a simple neural network to solve a case study. We got a benchmark accuracy of around 65% on the test set using our simple model. Now, we will try to improve this score using Convolutional Neural Networks.

Why Convolutional Neural Networks (CNNs)?

Before we get to the implementation part, let’s quickly look at why we need CNNs in the first place and how they are helpful.

We can consider Convolutional Neural Networks, or CNNs, as feature extractors that help to extract features from images.

In a simple neural network, we convert a 3-dimensional image to a single dimension, right? Let’s look at an example to understand this:

Can you identify the above image? Doesn’t seem to make a lot of sense. Now, let’s look at the below image:

We can now easily say that it is an image of a dog. What if I tell you that both these images are the same? Believe me, they are! The only difference is that the first image is a 1-D representation whereas the second one is a 2-D representation of the same image.
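To make the 1-D versus 2-D point concrete, here is a minimal NumPy sketch. The 3x3 "image" with a bright vertical stripe is a made-up example, not taken from the dataset; it only shows how flattening lays the rows end to end so that the stripe is no longer visible as a stripe.

```python
import numpy as np

# A tiny made-up 3x3 grayscale "image" with a bright vertical stripe.
image_2d = np.array([
    [0, 255, 0],
    [0, 255, 0],
    [0, 255, 0],
], dtype=np.uint8)

# Flattening places the rows one after another in a single vector.
image_1d = image_2d.reshape(-1)

print(image_2d.shape)     # (3, 3)
print(image_1d.tolist())  # [0, 255, 0, 0, 255, 0, 0, 255, 0]
```

In the flattened vector the three bright pixels are three positions apart, so their vertical alignment is no longer obvious from the 1-D view alone.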

Spatial Orientation

Artificial neural networks (ANNs) also lose the spatial orientation of the images. Let’s again take an example and understand it:

Can you identify the difference between these two images? Well, at least I cannot. It is very difficult to identify the difference since this is a 1-D representation. Now, let’s look at the 2-D representation of these images:

Don’t you love how different the same image looks by simply changing its representation? Here, the orientation of the images has been changed but we were unable to identify it by looking at the 1-D representation.

This is the problem with artificial neural networks – they lose spatial orientation.

Large number of parameters

Another problem with neural networks is the large number of parameters at play. Let’s say our image has a size of 28*28*3. Flattened, that gives 2,352 input values, so every neuron in the first hidden layer needs 2,352 weights. What if we have an image of size 224*224*3? Each neuron would then need 150,528 weights.

And these parameters will only increase as we increase the number of hidden layers. So, the two major disadvantages of using artificial neural networks are:

- The spatial orientation of the image is lost

- The number of parameters increases drastically
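The growth in parameters can be checked with some quick arithmetic. The sketch below is only an illustration: the hidden layer of 100 units and the convolution with four 3x3 filters are hypothetical choices, picked to contrast a fully connected layer with a convolutional one.

```python
def dense_params(n_inputs, n_units):
    """Weights plus biases of one fully connected layer."""
    return n_inputs * n_units + n_units


def conv_params(in_channels, out_channels, kernel_size):
    """Weights plus biases of one 2D convolution with square kernels."""
    return out_channels * in_channels * kernel_size * kernel_size + out_channels


small_inputs = 28 * 28 * 3    # 2,352 values after flattening
large_inputs = 224 * 224 * 3  # 150,528 values after flattening
hidden_units = 100            # hypothetical hidden layer width

print(dense_params(small_inputs, hidden_units))  # 235300
print(dense_params(large_inputs, hidden_units))  # 15052900
print(conv_params(3, 4, 3))                      # 112
```

The convolution's parameter count does not depend on the image size at all, which is why it scales so much better than the dense layer.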

So how do we deal with this problem? How can we preserve the spatial orientation as well as reduce the learnable parameters?

This is where convolutional neural networks can be really helpful. CNNs extract features from an image that help in classifying the objects in it. They start by extracting low-level features (like edges) and then build up to higher-level features such as shapes.

We use filters to extract features from the images and pooling techniques to reduce the number of learnable parameters.
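As a rough illustration of what a bank of filters and a pooling step do to an image tensor, the sketch below passes one random 28x28 grayscale image through a 3x3 convolution with four filters and a 2x2 max pool. The layer settings mirror the ones readers quote in the comments; the random input is a stand-in, not real data.

```python
import torch
from torch.nn import Conv2d, MaxPool2d

# One random single-channel 28x28 image: (batch, channels, height, width).
x = torch.randn(1, 1, 28, 28)

# Four learnable 3x3 filters; padding=1 keeps the spatial size unchanged.
conv = Conv2d(1, 4, kernel_size=3, stride=1, padding=1)
# 2x2 max pooling with stride 2 halves the height and the width.
pool = MaxPool2d(kernel_size=2, stride=2)

features = conv(x)
pooled = pool(features)

print(features.shape)  # torch.Size([1, 4, 28, 28])
print(pooled.shape)    # torch.Size([1, 4, 14, 14])

# The filters hold only 4 * (1 * 3 * 3) weights + 4 biases = 40 parameters.
n_conv_params = sum(p.numel() for p in conv.parameters())
print(n_conv_params)   # 40
```

Pooling itself has no learnable parameters; it shrinks the feature maps, so the layers that follow need fewer weights.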

We will not be diving into the details of these topics in this article. If you wish to understand how filters help to extract features and how pooling works, I highly recommend you go through A Comprehensive Tutorial to learn Convolutional Neural Networks from Scratch.

Understanding the Problem Statement: Identify the Apparels

Enough theory – let’s get coding! We’ll be taking up the same problem statement we covered in the first article. That way, we can directly compare our CNN model’s performance to the simple neural network we built there.

You can download the dataset for this ‘Identify the Apparels’ problem from here.

Let me quickly summarize the problem statement. Our task is to identify the type of apparel by looking at a variety of apparel images. There are a total of 10 classes into which we can classify the images:

| Label | Description |
| --- | --- |
| 0 | T-shirt/top |
| 1 | Trouser |
| 2 | Pullover |
| 3 | Dress |
| 4 | Coat |
| 5 | Sandal |
| 6 | Shirt |
| 7 | Sneaker |
| 8 | Bag |
| 9 | Ankle boot |

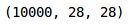

The dataset contains a total of 70,000 images. 60,000 of these images belong to the training set and the remaining 10,000 are in the test set. All the images are grayscale images of size (28*28). The dataset contains two folders – one each for the training set and the test set. In each folder, there is a .csv file that has the id of the image and its corresponding label, and a folder containing the images for that particular set.

Ready to begin? We will start by importing the required libraries:

Loading the dataset

Now, let’s load the dataset, including the train, test and sample submission file:

- The train file contains the id of each image and its corresponding label

- The test file, on the other hand, only has the ids and we have to predict their corresponding labels

- The sample submission file will tell us the format in which we have to submit the predictions

We will read all the images one by one and stack them one over the other in an array. We will also divide the pixel values by 255 so that they fall in the range [0,1]. Keeping the inputs on a small, consistent scale generally helps the optimizer converge.

So, let’s go ahead and load the images:
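The stacking and scaling step can be sketched as follows. This is a minimal illustration under stated assumptions: the random arrays stand in for images read from disk, and `to_unit_range` is a hypothetical helper, not a function from the article. It divides by 255 only when the pixels are integers, because a reader notes in the comments that images read as floats may already lie in [0,1].

```python
import numpy as np


def to_unit_range(img):
    """Return a float32 copy of img with pixel values in [0, 1].

    Integer images (0..255) are divided by 255; float images are assumed
    to be in [0, 1] already and are only cast (an assumption of this sketch).
    """
    if np.issubdtype(img.dtype, np.integer):
        return img.astype("float32") / 255.0
    return img.astype("float32")


# Stand-in for images read from disk: five random 28x28 uint8 images.
rng = np.random.default_rng(0)
raw_images = [rng.integers(0, 256, size=(28, 28), dtype=np.uint8) for _ in range(5)]

# Scale each image, then stack them into one (n_images, 28, 28) array.
train_x = np.stack([to_unit_range(img) for img in raw_images])

print(train_x.shape)  # (5, 28, 28)
print(train_x.dtype)  # float32
```

With the real dataset the same stacking would give an array of shape (60000, 28, 28).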

As you can see, we have 60,000 images, each of size (28,28), in the training set. Since the images are in grayscale format, we only have a single channel and hence the shape (28,28).

Let’s now explore the data and visualize a few images:

These are a few examples from the dataset. I encourage you to explore more and visualize other images. Next, we will divide our images into a training and validation set.

Creating a validation set and preprocessing the images

We have kept 10% of the data in the validation set and the remainder in the training set. Next, let’s convert the images and the targets into torch format:

Similarly, we will convert the validation images:
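A hedged sketch of the split and the conversion is shown below. It uses a NumPy permutation for the 90/10 split rather than any particular library helper, and random arrays stand in for the real images and labels. It adds the channel axis that PyTorch convolutions expect and casts the targets to 64-bit integers, which is the dtype the cross-entropy loss requires for class labels.

```python
import numpy as np
import torch

# Stand-in data: 100 random "images" in [0, 1) and integer labels 0..9.
rng = np.random.default_rng(0)
images = rng.random((100, 28, 28), dtype=np.float32)
labels = rng.integers(0, 10, size=100)

# Shuffle the indices and hold out 10% for validation.
indices = rng.permutation(len(images))
n_val = int(0.1 * len(images))
val_idx, train_idx = indices[:n_val], indices[n_val:]

# (N, 28, 28) -> (N, 1, 28, 28): add the single grayscale channel.
train_x = torch.from_numpy(images[train_idx]).unsqueeze(1)
val_x = torch.from_numpy(images[val_idx]).unsqueeze(1)

# Class labels must be int64 ("long") tensors for CrossEntropyLoss.
train_y = torch.from_numpy(labels[train_idx]).long()
val_y = torch.from_numpy(labels[val_idx]).long()

print(train_x.shape, train_y.shape)  # torch.Size([90, 1, 28, 28]) torch.Size([90])
print(val_x.shape, val_y.shape)      # torch.Size([10, 1, 28, 28]) torch.Size([10])
```

The `.long()` cast is what avoids the "expected scalar type Long but found Int" error that several readers report in the comments.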

Our data is now ready. Finally, it’s time to create our CNN model!

Implementing CNNs using PyTorch

We will use a very simple CNN architecture with just 2 convolutional layers to extract features from the images. We’ll then use a fully connected dense layer to classify those features into their respective categories.
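To size the fully connected layer, it helps to track how each layer changes the height and width of the feature maps. The sketch below applies the standard output-size formula, floor((n + 2p - k) / s) + 1. It assumes 3x3 convolutions with stride 1 and padding 1, each followed by 2x2 max pooling with stride 2, which are the settings readers quote in the comments; the helper names are hypothetical.

```python
def output_size(size, kernel_size, stride=1, padding=0):
    """Spatial size after one convolution or pooling layer."""
    return (size + 2 * padding - kernel_size) // stride + 1


def feature_map_size(image_size, n_blocks=2):
    """Size after n_blocks of (3x3 conv, padding 1) + (2x2 max pool, stride 2)."""
    size = image_size
    for _ in range(n_blocks):
        size = output_size(size, kernel_size=3, stride=1, padding=1)  # convolution
        size = output_size(size, kernel_size=2, stride=2)             # max pooling
    return size


print(feature_map_size(28))   # 7
print(feature_map_size(500))  # 125
```

With 4 output channels, a 28x28 input therefore reaches the dense layer as 4 * 7 * 7 = 196 values. Under the same assumptions, a 500x500 input would need 4 * 125 * 125 input features instead.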

Let’s define the architecture:
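The following is a sketch of such an architecture, not a verbatim copy of the original code. The first convolution block, the start of the second block and the `Linear(4 * 7 * 7, 10)` layer follow the fragments readers quote in the comments. The rest of the second block (batch norm, ReLU, max pool) is an assumption made here by mirroring the first block, which is consistent with the 7x7 feature maps the linear layer expects.

```python
import torch
from torch.nn import BatchNorm2d, Conv2d, Linear, MaxPool2d, Module, ReLU, Sequential


class Net(Module):
    def __init__(self):
        super().__init__()
        self.cnn_layers = Sequential(
            # First convolution block: 1 input channel -> 4 feature maps
            Conv2d(1, 4, kernel_size=3, stride=1, padding=1),
            BatchNorm2d(4),
            ReLU(inplace=True),
            MaxPool2d(kernel_size=2, stride=2),  # 28x28 -> 14x14
            # Second convolution block (tail assumed to mirror the first)
            Conv2d(4, 4, kernel_size=3, stride=1, padding=1),
            BatchNorm2d(4),
            ReLU(inplace=True),
            MaxPool2d(kernel_size=2, stride=2),  # 14x14 -> 7x7
        )
        # Dense classifier over the flattened 4 x 7 x 7 feature maps
        self.linear_layers = Sequential(Linear(4 * 7 * 7, 10))

    def forward(self, x):
        x = self.cnn_layers(x)
        x = torch.flatten(x, 1)  # keep the batch axis, flatten the rest
        return self.linear_layers(x)


model = Net()
logits = model(torch.randn(8, 1, 28, 28))
print(logits.shape)  # torch.Size([8, 10])
```

The model returns raw scores (logits), one per class; the softmax is applied inside the cross-entropy loss during training.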

Let’s now call this model, and define the optimizer and the loss function for the model:
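A minimal sketch of this step is given below. The compact `Sequential` model is a hypothetical stand-in so that the snippet runs on its own. The cross-entropy loss matches the tracebacks readers post in the comments, while the Adam optimizer and its learning rate are assumptions chosen for illustration.

```python
import torch
from torch.nn import Conv2d, CrossEntropyLoss, Flatten, Linear, MaxPool2d, ReLU, Sequential
from torch.optim import Adam

# Compact stand-in for the CNN (hypothetical, for illustration only).
model = Sequential(
    Conv2d(1, 4, kernel_size=3, stride=1, padding=1),
    ReLU(),
    MaxPool2d(kernel_size=2, stride=2),
    Flatten(),
    Linear(4 * 14 * 14, 10),
)

# Optimizer and learning rate are assumptions, not values from the article.
optimizer = Adam(model.parameters(), lr=0.01)
# Cross-entropy loss for multi-class classification.
criterion = CrossEntropyLoss()

# Use the GPU when one is available, otherwise stay on the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
model = model.to(device)
criterion = criterion.to(device)

# Printing the model lists its layers in order.
print(model)
```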

This is the architecture of the model. We have two Conv2d layers and a Linear layer. Next, we will define a function to train the model:

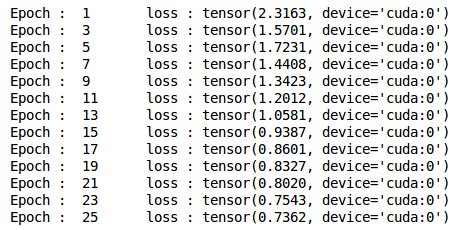

Finally, we will train the model for 25 epochs and store the training and validation losses:
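The training function and the epoch loop could look roughly like the sketch below. It is written under several assumptions: random tensors stand in for the real data, a small `Sequential` model stands in for the CNN, and only 5 epochs are run to keep it quick. Each call performs one full-batch update, as the code fragments quoted in the comments suggest. Switching to `model.eval()` with `torch.no_grad()` for the validation pass is a choice made in this sketch, prompted by a reader's question.

```python
import torch
from torch.nn import Conv2d, CrossEntropyLoss, Flatten, Linear, MaxPool2d, ReLU, Sequential
from torch.optim import Adam

torch.manual_seed(0)

# Stand-in data (random); the real tensors would come from the dataset.
x_train, y_train = torch.rand(64, 1, 28, 28), torch.randint(0, 10, (64,))
x_val, y_val = torch.rand(16, 1, 28, 28), torch.randint(0, 10, (16,))

# Hypothetical compact model, optimizer and loss for illustration.
model = Sequential(
    Conv2d(1, 4, kernel_size=3, stride=1, padding=1),
    ReLU(),
    MaxPool2d(kernel_size=2, stride=2),
    Flatten(),
    Linear(4 * 14 * 14, 10),
)
optimizer = Adam(model.parameters(), lr=0.01)
criterion = CrossEntropyLoss()

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
model = model.to(device)
x_train, y_train = x_train.to(device), y_train.to(device)
x_val, y_val = x_val.to(device), y_val.to(device)

train_losses, val_losses = [], []


def train(epoch):
    # Training mode: layers such as BatchNorm and Dropout behave accordingly.
    model.train()
    optimizer.zero_grad()                      # clear gradients from the last step
    loss_train = criterion(model(x_train), y_train)
    loss_train.backward()                      # backpropagate
    optimizer.step()                           # update the weights

    # Evaluation mode and no gradient tracking for the validation pass.
    model.eval()
    with torch.no_grad():
        loss_val = criterion(model(x_val), y_val)

    # Store plain floats so the lists do not keep the graph alive.
    train_losses.append(loss_train.item())
    val_losses.append(loss_val.item())
    if epoch % 2 == 0:
        print(f"Epoch: {epoch + 1}\tvalidation loss: {loss_val.item():.4f}")


n_epochs = 5  # the article trains for 25 epochs
for epoch in range(n_epochs):
    train(epoch)
```

Note that `model.train()` only sets the mode of the network; the actual learning happens in the `backward()` and `step()` calls.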

We can see that the validation loss is decreasing as the epochs are increasing. Let’s visualize the training and validation losses by plotting them:

Ah, I love the power of visualization. We can clearly see that the training and validation losses are in sync. It is a good sign as the model is generalizing well on the validation set.

Let’s check the accuracy of the model on the training and validation set:
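One simple way to compute accuracy is to take the class with the highest score for each image and compare it with the target. The sketch below does this on a small hand-made batch of scores; the `accuracy` helper and the numbers are illustrative, not the article's code or results.

```python
import torch


def accuracy(logits, targets):
    """Fraction of rows whose highest-scoring class equals the target."""
    predictions = logits.argmax(dim=1)
    return (predictions == targets).float().mean().item()


# Hand-made scores for 4 samples and 3 classes.
logits = torch.tensor([
    [2.0, 0.1, 0.3],  # predicted class 0
    [0.2, 1.5, 0.1],  # predicted class 1
    [0.9, 0.2, 0.1],  # predicted class 0
    [0.1, 0.2, 3.0],  # predicted class 2
])
targets = torch.tensor([0, 1, 2, 2])

print(accuracy(logits, targets))  # 0.75
```

For a real model, the logits would come from a forward pass on the training or validation images with the model in evaluation mode and gradient tracking disabled.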

An accuracy of ~72% on the training set is pretty good. Let’s check the accuracy for the validation set as well:

As we saw with the losses, the accuracy is also in sync here – we got ~72% on the validation set as well.

Generating predictions for the test set

It’s finally time to generate predictions for the test set. We will load all the images in the test set, do the same pre-processing steps as we did for the training set and finally generate predictions.

So, let’s start by loading the test images:

Now, we will do the pre-processing steps on these images similar to what we did for the training images earlier:

Finally, we will generate predictions for the test set:
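Generating predictions follows the same pattern as computing accuracy, minus the targets. In the sketch below an untrained stand-in model and random test images are assumptions that keep the snippet self-contained; with a trained model the predicted labels would of course be meaningful.

```python
import numpy as np
import torch
from torch.nn import Conv2d, Flatten, Linear, MaxPool2d, ReLU, Sequential

torch.manual_seed(0)

# Hypothetical stand-in for the trained CNN.
model = Sequential(
    Conv2d(1, 4, kernel_size=3, stride=1, padding=1),
    ReLU(),
    MaxPool2d(kernel_size=2, stride=2),
    Flatten(),
    Linear(4 * 14 * 14, 10),
)

# Stand-in test images, already scaled to [0, 1): shape (N, 28, 28).
test_images = np.random.default_rng(0).random((6, 28, 28), dtype=np.float32)
test_x = torch.from_numpy(test_images).unsqueeze(1)  # -> (N, 1, 28, 28)

# Evaluation mode, no gradient tracking.
model.eval()
with torch.no_grad():
    logits = model(test_x)

# The predicted label is the class with the highest score.
predictions = logits.argmax(dim=1).cpu().numpy()
print(predictions.shape)  # (6,)
```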

Replace the labels in the sample submission file with the predictions and finally save the file and submit it on the leaderboard:
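A small pandas sketch of this step follows. The ids, the predictions and the `label` column name are assumptions made for illustration; the real sample submission file defines the actual columns and ids.

```python
import numpy as np
import pandas as pd

# Stand-in for the sample submission file (hypothetical ids and column names).
sample_submission = pd.DataFrame({"id": [60001, 60002, 60003], "label": [0, 0, 0]})

# Stand-in predictions, one per test image, in the same order as the ids.
predictions = np.array([9, 2, 1])

# Overwrite the placeholder labels and save without the index column.
sample_submission["label"] = predictions
sample_submission.to_csv("submission.csv", index=False)

print(sample_submission)
```

The order of the predictions has to match the order of the ids in the file, so the test images should be loaded in that same order.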

You will see a file named submission.csv in your current directory. You just have to upload it on the solution checker of the problem page which will generate the score.

Our CNN model gave us an accuracy of around 71% on the test set. That is quite an improvement on the 65% we got using a simple neural network in our previous article.

Frequently Asked Questions

A. PyTorch is a popular open-source machine learning framework used for building and training deep learning models. It provides a dynamic computational graph, allowing for efficient model development and experimentation. PyTorch offers a wide range of tools and libraries for tasks such as neural networks, natural language processing, computer vision, and reinforcement learning, making it versatile for various machine learning applications.

A. PyTorch is an open-source machine learning library and deep learning framework primarily developed by Facebook’s AI Research (FAIR) team. It provides a Python interface for tensor computation with GPU acceleration and offers a dynamic computational graph, allowing for flexible and efficient deep learning model development. PyTorch supports various neural network architectures, automatic differentiation, and a rich ecosystem of libraries and tools for tasks like computer vision, natural language processing, and more.

End Notes

In this article, we looked at how CNNs can be useful for extracting features from images. They helped us to improve the accuracy of our previous neural network model from 65% to 71% – a significant upgrade.

You can play around with the hyperparameters of the CNN model and try to improve accuracy even further. Some of the hyperparameters to tune can be the number of convolutional layers, number of filters in each convolutional layer, number of epochs, number of dense layers, number of hidden units in each dense layer, etc.

In the next article of this series, we will learn how to use pre-trained models like VGG-16 and how to checkpoint models in PyTorch. And as always, if you have any doubts related to this article, feel free to post them in the comments section below!

Very Nice Article with proper coding and result explanation....!

Glad you liked it Manoj!

This is a great article. Looking forward to seeing your next article.

Hi Dhruvit, Glad you liked it! I am currently working on the next article of this series and it will be out soon. I will inform you once it is live.

I love this article. I would like to understand each of the libraries of torch.nn which you used in the building model, if you could share any documents then it would be better.

Hi Dhruvit, You can refer to the following documentation to understand the nn module of torch: https://pytorch.org/docs/stable/nn.html

you should maybe explain what youre doing instead of just pasting a block of code, idiot. not all pictures are 28x28 grayscale

Hi Pajeet, I checked the data and found that all the images are of shape 28*28. If you came across an image that is not of this shape, feel free to point it out. Also, I have tried my best to include comments in the code to simplify it. If, even after reading the comments, you are unable to understand any line of code, feel free to ask here and I will be happy to help.

It is not clear for me how we get the score of test set. I am working with custom data set. Hence is that OK that I can get the score of test set in a way that we did for validation set? Thanks a lot and I really like your way of presenting things. Great work, can't wait to see your next article.

Hi Mesay, For the test set, we do not have the target variable and hence getting the score for the test set is not possible. Hence, in order to know how well our model will perform on the test set, we create a validation set and check the performance of the model on this validation set. If the validation score is high, generally we can infer that the model will perform well on test set as well.

Great! Saves me !

Glad you liked it!

Hi Pulkit, Thank you for posting this. I just had a quick question about defining the neural network architecture. If you were working with differently sized images (say, 500 x 500), what numbers would you have to change in the neural net class? Thank you.

Hi Joseph, You have to make the changes in the code where we are defining the model architecture.

Hi Pulkit, Thanks for the wonderful blog. Can you explain how the image size changes through the convolutions conv1 and conv2, with stride and padding, so that we can give the right input size to the fc layer?

Hi Manideep, Refer to the following article, where the output shapes are explained after each layer, i.e. convolution, pooling, stride, etc.: https://www.analyticsvidhya.com/blog/2018/12/guide-convolutional-neural-network-cnn/

Hi Pulkit, I am currently working on the CIFAR 10 database (with 50 000 32*32 RGB images), so the shape of my data is 50 000, 32, 32, 3. How should I change the shape of my data to make it work ? (Euclidean norm...?) because I don't understand why you changed the shape of your data in the step "Creating a validation set and preprocessing the images" - you went from 5 400,28,28 to 5 400, 1, 28,28. Thanks for the help

Hi Georges, PyTorch expects the input in a specific format: (sample_size, number of channels, height of image, width of image). So, for your case it will be (50000, 3, 32, 32).
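For readers with channel-last data such as CIFAR-10, here is a hedged sketch of the conversion. The zero-filled array is a stand-in for real images. The key point is that the channel axis has to be moved with `permute`; a plain reshape to (N, 3, 32, 32) would give the right shape but scramble the pixels.

```python
import numpy as np
import torch

# Stand-in batch in channel-last layout: (N, height, width, channels).
batch_nhwc = np.zeros((8, 32, 32, 3), dtype=np.float32)
batch_nhwc[0, 5, 7, 2] = 1.0  # mark one pixel in the last channel

# Move the channel axis to position 1: (N, H, W, C) -> (N, C, H, W).
batch_nchw = torch.from_numpy(batch_nhwc).permute(0, 3, 1, 2).contiguous()

print(batch_nchw.shape)                 # torch.Size([8, 3, 32, 32])
print(batch_nchw[0, 2, 5, 7].item())    # 1.0

# Grayscale images have no channel axis, so one is added instead.
gray = torch.zeros(8, 28, 28).unsqueeze(1)
print(gray.shape)                       # torch.Size([8, 1, 28, 28])
```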

Hi Pulkit, First of all, Thank You! This and the previous article helped me understand the PyTorch framework. While implementing the code, I came across an issue. While running this code:

    # defining the number of epochs
    n_epochs = 25
    # empty list to store training losses
    train_losses = []
    # empty list to store validation losses
    val_losses = []
    # training the model
    for epoch in range(n_epochs):
        train(epoch)

I got this error:

    RuntimeError Traceback (most recent call last)
    in
          7 # training the model
          8 for epoch in range(n_epochs):
    ----> 9     train(epoch)

    in train(epoch)
          8 # converting the data into GPU format
          9 if torch.cuda.is_available():
    ---> 10     x_train = x_train.cuda()
         11     y_train = y_train.cuda()
         12     x_val = x_val.cuda()

    RuntimeError: CUDA out of memory. Tried to allocate 162.00 MiB (GPU 0; 4.00 GiB total capacity; 2.94 GiB already allocated; 58.45 MiB free; 7.36 MiB cached)

How to solve this error?

Hi Neha, The error indicates that your GPU has run out of memory, so you need a GPU with more memory to run the code as written. You can try running the code in Google Colab.

Hi, I want to ask about train() function. In your code, you used model.train() for training. In some resources on the internet, they trained by using for loop. What is the differences between using model.train() and for loop? Does model.train() trains exactly or not? I am confused about this situation. If I use for loop and iterating for each batch, it takes almost 3-4 minutes to produce loss values on my dataset. But if I use model.train(), it takes only 1 second to produce loss values. can you explain this situation? I searched on the internet but I did not understand very well.

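Hi, model.train() only switches the model into training mode, which affects layers such as BatchNorm and Dropout; it does not run any training by itself. The train() function defined in the article performs a single update over the whole training set per call, and I have used a for loop to call it once per epoch. If you call it only once, the model will be trained for a single epoch.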

Hi Pulkit!! Really a very nice blog on deep learning using PyTorch . I am working in Google Colab , but I am unable to read the images saved in a zip file of my local system. Please suggest how to read the images from zip file in Google Colab.

Hi Pulkit, Hats off for your effort. However, I wanted to highlight a nasty bug which I had to troubleshoot while trying to run your code on my local machine. The error message is as follows:

    Expected object of device type cuda but got device type cpu for argument #2 'target' in call to _thnn_nll_loss_forward

This comes while trying to calculate the losses:

    # computing the training and validation loss
    loss_train = criterion(output_train, y_train)
    loss_val = criterion(output_val, y_val)

To fix this, the targets need to be converted to a long tensor:

    y_train = y_train.type(torch.cuda.LongTensor)  # -- additional
    y_val = y_val.type(torch.cuda.LongTensor)  # -- additional
    # computing the training and validation loss
    loss_train = criterion(output_train, y_train)
    loss_val = criterion(output_val, y_val)

It is also important to highlight that the type is .cuda.LongTensor; otherwise we will encounter a device mismatch error. Thanks again for your effort. :)

Thank you for sharing.

Hi, thanks for the great tutorial, and also for this comment... I came across the same error message, and since I am running the examples on CPU, it wasn't possible to use the torch.cuda.LongTensor type conversion. Instead, it was possible to use the long() function on the tensor directly:

    # Instead of
    # y_train = y_train.type(torch.cuda.LongTensor)
    y_train = y_train.long()
    # and instead of
    # y_val = y_val.type(torch.cuda.LongTensor)
    y_val = y_val.long()

Hey Pulkit, Thank you for the guide. I just finished learning the basics of this subject and this helps me practice. I have a question though: is it OK to make the number of outputs 3x the number of inputs? I want to make a NN that, given a grayscale image, returns an RGB colored image, so I guess I would need x3 for the three channels? Thanks in advance. Kind regards, Milorad

Hi Milorad, The problem that you are trying to solve is not an image classification problem. You are trying to change the grayscale images to RGB images. People generally use GANs for such problems.

Hi, Why are you not using model.eval() when you are validating each epoch?

Hi Pulkit. Thank you for sharing, but one part of your article confuses me and I need your help. In your code:

    # loading training images
    train_img = []
    for img_name in tqdm(train['id']):
        # defining the image path
        image_path = 'train_LbELtWX/train/' + str(img_name) + '.png'
        # reading the image
        img = imread(image_path, as_gray=True)
        # normalizing the pixel values
        img /= 255.0
        # converting the type of pixel to float 32
        img = img.astype('float32')
        # appending the image into the list
        train_img.append(img)

In the step that normalizes the pixel values, the images were inherently already scaled to [0,1], not [0,255], so we do not need to divide by 255. I hope you can explain more about it. Thank you so much.

Thanks for great Tutorial. I am searching for some Pytorch model suited for corner detection (Fine-tuning it for corner detection). Can you guide me , Thanks in advance!

Hi Pulkit, I am not able to get the CSV files of data set. On the link which is given .gz file are available .Can you please share the csv files for this problem. Thanks Jayana

Thank you so much for the tutorial. However, can you please clarify what '1' and '4' in the Conv2d layer refer to, and '4' in BatchNorm2d too? Thanks!

    self.cnn_layers = Sequential(
        # Defining a 2D convolution layer
        Conv2d(1, 4, kernel_size=3, stride=1, padding=1),
        BatchNorm2d(4),
        ReLU(inplace=True),
        MaxPool2d(kernel_size=2, stride=2),
        # Defining another 2D convolution layer
        Conv2d(4, 4, kernel_size=3, stride=1, padding=1),

Hi Pulkit, Thanks for the great explanation. I'm struggling to comprehend how you got the dimensions of the linear layer: >> Linear(4 * 7 * 7, 10) Can you please elaborate on that? Regards, Kartikeya

Hi, Thanks for your wonderful blog. It has been a real help in learning the PyTorch implementation of image classification problems. I had a doubt. If I reduced the overall number of images in the training set, would there be any changes to the code? I am running the same code with an input of just 1000 images (training+validation). Please advise. I am getting the following error when I do so - RuntimeError: size mismatch, m1: [25200 x 7], m2: [196 x 10] at /pytorch/aten/src/TH/generic/THTensorMath.cpp:136

Hi Pulkit Sharma, I really like your tutorial. It's amazing. I have a question, Sir: can we use a convolutional layer as the final layer of a CNN instead of a fully connected layer?

Hi Pulkit, where should I place the data files? I don't want to use a path to my /Downloads/ directory. I am using programs provided under Anaconda.

Hi, great article. One thing that would be helpful is if you could generalize your code for mini-batch processing. Or you can just comment here on how to update the code for that. Many thanks!

On line 2846 of ~\anaconda3\lib\site-packages\torch\nn\functional.py, in cross_entropy(input, target, weight, size_average, ignore_index, reduce, reduction, label_smoothing), I get the following error:

    RuntimeError: expected scalar type Long but found Int

The above is called from line 1150 of ~\anaconda3\lib\site-packages\torch\nn\modules\loss.py in forward(self, input, target), which in turn is called from line 1102 of ~\anaconda3\lib\site-packages\torch\nn\modules\module.py in _call_impl(self, *input, **kwargs). Please help as there is no way for me to resolve this!

Dear Pulkit, Thank you for sharing such an understandable CNN algorithm! While running the code I encountered the following problem (after the training started):

    # training the model
    for epoch in range(n_epochs):
        train(epoch)

The error:

    ---------------------------------------------------------------------------
    RuntimeError Traceback (most recent call last)
    in
          7 # training the model
          8 for epoch in range(n_epochs):
    ----> 9     train(epoch)

    in train(epoch)
         21
         22     # computing the training and validation loss
    ---> 23     loss_train = criterion(output_train, y_train)
         24     loss_val = criterion(output_val, y_val)
         25     train_losses.append(loss_train)

    ~\anaconda3\lib\site-packages\torch\nn\modules\module.py in __call__(self, *input, **kwargs)
        530             result = self._slow_forward(*input, **kwargs)
        531         else:
    --> 532             result = self.forward(*input, **kwargs)
        533         for hook in self._forward_hooks.values():
        534             hook_result = hook(self, input, result)

    ~\anaconda3\lib\site-packages\torch\nn\modules\loss.py in forward(self, input, target)
        914     def forward(self, input, target):
        915         return F.cross_entropy(input, target, weight=self.weight,
    --> 916                                ignore_index=self.ignore_index, reduction=self.reduction)
        917
        918

    ~\anaconda3\lib\site-packages\torch\nn\functional.py in cross_entropy(input, target, weight, size_average, ignore_index, reduce, reduction)
       2019     if size_average is not None or reduce is not None:
       2020         reduction = _Reduction.legacy_get_string(size_average, reduce)
    -> 2021     return nll_loss(log_softmax(input, 1), target, weight, None, ignore_index, None, reduction)
       2022
       2023

    ~\anaconda3\lib\site-packages\torch\nn\functional.py in nll_loss(input, target, weight, size_average, ignore_index, reduce, reduction)
       1836                              .format(input.size(0), target.size(0)))
       1837     if dim == 2:
    -> 1838         ret = torch._C._nn.nll_loss(input, target, weight, _Reduction.get_enum(reduction), ignore_index)
       1839     elif dim == 4:
       1840         ret = torch._C._nn.nll_loss2d(input, target, weight, _Reduction.get_enum(reduction), ignore_index)

    RuntimeError: expected scalar type Long but found Int

All the previous computations were just fine. Do you know what the issue with the "scalar type Long" is? Thank you, With kind regards, Antisha

Once you put the model into .eval() mode, it performs near random chance. I'm not sure, but I think almost all of the training is going into the non-deterministic batch normalization layers, and little or none into the conv2d layers, so the model is erratic and not useful. Additional time should be spent on the architecture for this tutorial so that a performant end result can be found.