This article was published as a part of the Data Science Blogathon.

Introduction

The machine learning life cycle, or more precisely the machine learning development life cycle, is a set of guidelines to follow when we build machine learning-based projects. Following the life cycle helps us build a project efficiently, and its main purpose is to arrive at a solution to the problem the project addresses.

In this article, we will study in detail what the machine learning life cycle is and which steps we need to follow to implement it. Beginners often think that a machine learning project is just about measuring the accuracy of the model or performing EDA (Exploratory Data Analysis). But when it comes to real-life products, there is a lot more to consider in order to build an end-to-end application. So let's look at the steps involved in building real-world machine learning projects.

Framing the Problem

- This is the most basic and also an essential step in developing a machine learning project.

- In this step, we must work out the problem statement of our project so that we understand every parameter of the problem.

- Things to consider include the actual problem we are trying to solve, the members of the team, how to approach the solution, the cost involved, how to get the data, which algorithm and framework to use, where to deploy the model, etc.

Only after all of these things are figured out properly can we proceed to the further steps.

Gathering Data

For college students, data is easily available from various websites, most often Kaggle, but for companies it is a different scenario.

There are different ways for companies to collect data:

- APIs: Call the API from Python code and fetch the data in JSON format.

- Web Scraping: Sometimes data is not available as a ready-made dataset and is only displayed on some website, so we need to extract it from there. For example, Trivago uses this method to collect hotel price data from other websites.

- Data Warehouse: Data is also stored in databases, but it cannot be used directly because it is live, running data. So the data from the database is first stored in a data warehouse and then used from there.

- Clusters: Sometimes the data is big data that lives in tools like Spark, spread across a cluster, so it is fetched from these clusters.
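As a minimal sketch of the API route described above, the snippet below downloads a JSON document and turns it into a list of Python dictionaries. The field names `name` and `price`, and any URL passed to `fetch_records`, are hypothetical placeholders and not a real service. Only the standard library is used, and the demonstration at the bottom parses a hard-coded payload instead of making a live call.

```python
import json
from urllib.request import urlopen


def parse_records(payload):
    """Decode a JSON array of objects into a list of dicts."""
    records = json.loads(payload)
    if not isinstance(records, list):
        raise ValueError("expected a JSON array of records")
    return records


def fetch_records(url, timeout=10.0):
    """Download a JSON array from `url` (a hypothetical endpoint) and parse it."""
    with urlopen(url, timeout=timeout) as response:
        return parse_records(response.read().decode("utf-8"))


if __name__ == "__main__":
    # Offline demonstration: parse a sample payload rather than call a real API.
    sample = '[{"name": "Hotel A", "price": 120}, {"name": "Hotel B", "price": 95}]'
    for record in parse_records(sample):
        print(record["name"], record["price"])
```

Keeping the parsing step separate from the download makes it easy to check the data handling without network access.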

Data Preprocessing

- The gathered data is most likely to be unclean, i.e., it has structural issues, missing values, outliers, noise, etc.

- This data cannot be used directly in a machine learning model, so we need to preprocess the dirty data to make it useful.

- Preprocessing involves removing duplicates, handling missing values and outliers, and scaling values.

- Basically, preprocessing puts the data into a format that machine learning models can use.
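The four preprocessing operations listed above can be sketched in plain Python as follows. This is only one possible set of choices, made here for illustration: missing values are filled with the median, outliers are dropped with the 1.5 × IQR rule, and scaling is min-max scaling to [0, 1]. The function name `preprocess` and the row layout (a list of dicts) are assumptions; in practice a library such as pandas is commonly used for this.

```python
from statistics import median, quantiles


def preprocess(rows, column):
    """Return cleaned copies of `rows` (a list of dicts) for one numeric column."""
    # 1. Remove exact duplicate rows, keeping the first occurrence.
    seen, unique = set(), []
    for row in rows:
        key = tuple(sorted(row.items()))
        if key not in seen:
            seen.add(key)
            unique.append(dict(row))

    # 2. Replace missing values (None) with the median of the observed values.
    fill = median(r[column] for r in unique if r[column] is not None)
    for r in unique:
        if r[column] is None:
            r[column] = fill

    # 3. Drop outliers that lie more than 1.5 * IQR outside the quartiles.
    q1, _, q3 = quantiles([r[column] for r in unique], n=4)
    low, high = q1 - 1.5 * (q3 - q1), q3 + 1.5 * (q3 - q1)
    kept = [r for r in unique if low <= r[column] <= high]

    # 4. Min-max scale the column into the range [0, 1].
    lo, hi = min(r[column] for r in kept), max(r[column] for r in kept)
    for r in kept:
        r[column] = (r[column] - lo) / (hi - lo) if hi > lo else 0.0
    return kept
```

The order matters in this sketch: missing values are filled before the quartiles are computed, and scaling happens last so that an outlier does not compress every other value towards zero.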

Exploratory Data Analysis

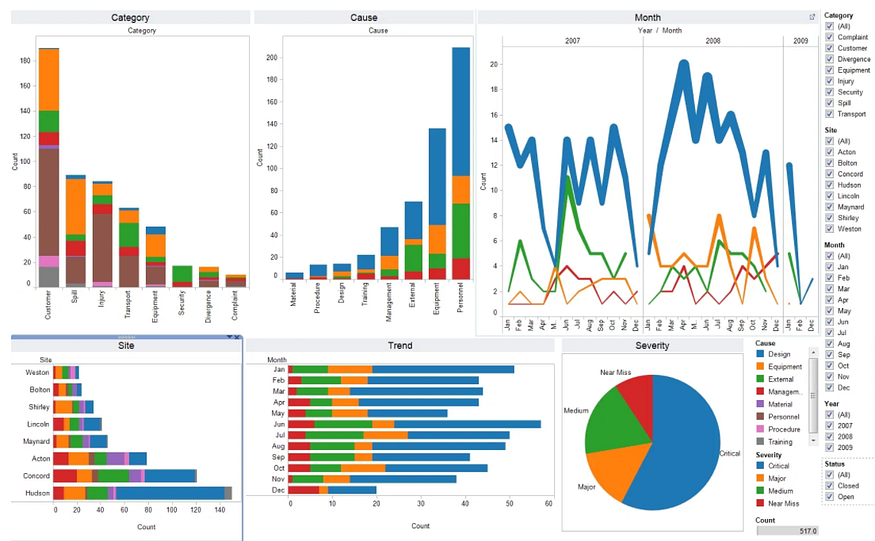

- As the name suggests, we analyze the data by studying the relationship between input and output.

- This stage gives insights into the data through visualization with charts and graphs, outlier detection, univariate analysis (independent analysis of each column, e.g. mean and standard deviation), bivariate analysis (analysis of two columns together), etc.

- The whole idea behind this stage is to get a concrete idea about the data.

- It is also an important stage because it gives clear insights into the data.

- The more time we spend on EDA, the more we get to know about the data, which helps in decision-making while implementing the model.
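To make the univariate and bivariate analysis mentioned above concrete, here is a small sketch using only the standard library. `describe` summarizes a single column, and `pearson` measures the linear relationship between two columns. The function names and the sample columns (`hours`, `score`) are illustrative assumptions; charting libraries are usually added on top of summaries like these.

```python
from math import sqrt
from statistics import mean, stdev


def describe(values):
    """Univariate analysis: summary statistics of one numeric column."""
    return {
        "mean": mean(values),
        "std": stdev(values),  # sample standard deviation
        "min": min(values),
        "max": max(values),
    }


def pearson(xs, ys):
    """Bivariate analysis: Pearson correlation between two numeric columns."""
    mx, my = mean(xs), mean(ys)
    covariance = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    spread = sqrt(sum((x - mx) ** 2 for x in xs) * sum((y - my) ** 2 for y in ys))
    return covariance / spread


if __name__ == "__main__":
    hours = [1, 2, 3, 4, 5]        # hypothetical input column
    score = [52, 55, 61, 70, 74]   # hypothetical output column
    print(describe(hours))
    print(pearson(hours, score))
```

A correlation close to 1 or -1 suggests a strong linear relationship between the two columns, while a value near 0 suggests little linear relationship.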

Feature Engineering and Selection

- Features are the input columns. Feature engineering means creating new columns from existing columns, or making intelligent changes to existing columns, to make analysis easier.

- Feature selection means selecting only the columns that are necessary for implementing the model.

- Sometimes the data has many columns, but the model requires only a few essential ones.

- In such a case, we select only those few columns.
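Both ideas can be sketched in a few lines. In the example below, a new column `bmi` is derived from two existing columns, and then only the columns we consider useful are kept. The column names (`height_m`, `weight_kg`, `age`, `favourite_colour`) are hypothetical, and the selection here is done by hand rather than by any automatic criterion.

```python
def add_bmi(row):
    """Feature engineering: derive a new column from two existing ones."""
    new_row = dict(row)  # copy so the original row is left unchanged
    new_row["bmi"] = row["weight_kg"] / row["height_m"] ** 2
    return new_row


def select_columns(rows, columns):
    """Feature selection: keep only the listed columns in each row."""
    return [{c: row[c] for c in columns} for row in rows]


if __name__ == "__main__":
    people = [
        {"age": 30, "height_m": 2.0, "weight_kg": 80.0, "favourite_colour": "red"},
        {"age": 45, "height_m": 1.5, "weight_kg": 54.0, "favourite_colour": "blue"},
    ]
    engineered = [add_bmi(p) for p in people]
    print(select_columns(engineered, ["age", "bmi"]))
```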

Model Training

- Once we are sure about the data, we use it to train our model. Training is required so that the model can learn the various patterns, rules, and features in the data.

- We train models using various algorithms and then evaluate them with different metrics such as accuracy score, mean squared error, etc.

- The best model is selected and its hyperparameters (the settings chosen before training) are tuned so that the performance of the model improves.

- This is known as hyperparameter tuning.
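The evaluate-and-tune loop described above can be sketched with a tiny k-nearest-neighbours classifier written in plain Python. The algorithm is chosen here purely for illustration; it is not the one the article prescribes. Its hyperparameter is `k`, the number of neighbours that vote, and `tune_k` simply tries each candidate value and keeps the one with the best accuracy on a separate validation set. All names and the one-dimensional sample data are assumptions.

```python
from collections import Counter


def knn_predict(train, point, k):
    """Predict the majority label among the k training points nearest to `point`."""
    nearest = sorted(train, key=lambda item: abs(item[0] - point))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


def accuracy(train, data, k):
    """Fraction of (x, label) pairs in `data` that are predicted correctly."""
    hits = sum(knn_predict(train, x, k) == label for x, label in data)
    return hits / len(data)


def tune_k(train, validation, candidates):
    """Hyperparameter tuning: return the candidate k with the best validation accuracy."""
    return max(candidates, key=lambda k: accuracy(train, validation, k))


if __name__ == "__main__":
    # One numeric feature per example; (2.8, "high") is a deliberately noisy point.
    train = [(1.0, "low"), (2.0, "low"), (3.0, "low"), (2.8, "high"),
             (7.0, "high"), (8.0, "high"), (9.0, "high")]
    validation = [(2.7, "low"), (7.5, "high"), (1.5, "low"), (8.5, "high")]
    print(tune_k(train, validation, [1, 3, 5]))
```

With `k = 1` the noisy training point misleads the prediction for 2.7, while larger values of `k` outvote it, which is the kind of difference tuning is meant to reveal.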

Model Testing

- Once our machine learning model has been trained on a given dataset, we test it.

- In this step, we check the accuracy of our model by giving it a test dataset that it has not seen during training.

- Testing determines the percentage accuracy of the model, which we compare against the requirements of the project or problem.
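A minimal sketch of this step is shown below, assuming a simple hold-out approach: part of the data is set aside before training, and accuracy is reported as the percentage of test predictions that match the true labels. The function names, the 20% test fraction, and the fixed random seed are illustrative assumptions.

```python
import random


def train_test_split(rows, test_fraction=0.2, seed=0):
    """Shuffle a copy of `rows` and split it into (train, test) lists."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def accuracy_percent(y_true, y_pred):
    """Percentage of predictions that match the true labels."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return 100.0 * correct / len(y_true)


if __name__ == "__main__":
    train, test = train_test_split(list(range(10)))
    print(len(train), len(test))
    print(accuracy_percent(["spam", "ham", "spam", "ham"],
                           ["spam", "ham", "ham", "ham"]))
```

Fixing the seed keeps the split reproducible, so that accuracy figures from different runs can be compared.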

Model Deployment

- In this step, we deploy the model in a real-world system, provided that the model prepared above produces results that are accurate enough for our requirements at an acceptable speed.

- Before deploying, we check whether the model's performance on the available data is still improving.

- For deployment, we can use Heroku, AWS, Google Cloud Platform, etc. Once deployed, our model is online and serves user requests.
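What "serving user requests" means can be sketched with Python's built-in HTTP server. This is an illustrative toy, not how any of the platforms above must be used: `predict` is a hypothetical stand-in for a trained model (a fixed linear rule on one feature `x`), and the port number is arbitrary. A client sends a JSON body in a POST request and receives a JSON prediction back.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def predict(features):
    """Stand-in for a trained model: a hypothetical fixed linear rule."""
    return 2.0 * features["x"] + 1.0


def handle_payload(body):
    """Turn a JSON request body into a JSON response body (both as bytes)."""
    features = json.loads(body)
    return json.dumps({"prediction": predict(features)}).encode("utf-8")


class PredictionHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        # Read exactly the number of bytes the client announced.
        length = int(self.headers.get("Content-Length", 0))
        response = handle_payload(self.rfile.read(length))
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(response)))
        self.end_headers()
        self.wfile.write(response)


def serve(port=8000):
    """Start the server; this call blocks until the process is interrupted."""
    HTTPServer(("0.0.0.0", port), PredictionHandler).serve_forever()
```

Calling `serve()` starts the service. A production deployment would normally add input validation, error handling, and a production-grade web server in front of the model.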

For an individual working on a personal or college project, these are the complete steps.

The next two steps are used by companies.

A. Testing App/Software:

- In this step, companies roll out alpha/beta versions of the deployed model to a particular group of users or clients to check whether the model works as required.

- Feedback is collected from these users and then acted upon. If the model works correctly, it is rolled out to everyone.

B. Optimize:

In this stage, companies use servers to back up the model and the data, to perform load balancing (serving the requests when many users arrive at once), and to handle model rotting (frequently re-training the model as data evolves with time). This step is generally automated.

Conclusion

It is important to follow all the steps in the life cycle. By following these steps, all the necessary requirements are collected and executed with proper planning (for example, by performing EDA and feature engineering). These steps help ensure that the machine learning model is well optimized and gives the desired results.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.