This article was published as a part of the Data Science Blogathon

Introduction

Naive Bayes is a simple but powerful classifier that uses Bayes’ Theorem to estimate how likely each class is given the observed data. It is commonly used for Natural Language Processing (NLP) tasks such as text categorization. Today, we will be constructing a Naive Bayes text classifier for topic categorization.

Before we move forward with the explanation, I want to emphasize that Naive Bayes is not the traditional method for analyzing topics. In fact, there are models designed specifically for discovering topics, such as Blei’s landmark Latent Dirichlet Allocation (LDA). But although Naive Bayes will be entering a pretty competitive market, its simplicity and accessible mathematical foundation make it a unique tool for developers.

Without any further ado, let’s get Naive.

Packages and Datasets

For this program, we will need only 2 packages: pandas and textmining. The former will help us load our data, while the latter will turn the text into a term-document matrix.

```python
import textmining
import pandas as pd
```

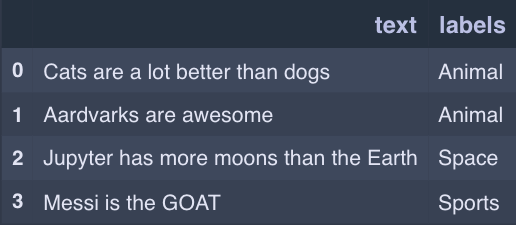

Now, let’s bring in the actual data. My dataset is composed of two columns: one named “text” and the other named “labels.” The dataset itself is confidential because it belongs to my research project, but yours should follow the same layout: one document per row in the “text” column, and its topic (here “Animal,” “Space,” or “Sports”) in the “labels” column.

Here’s the code for loading your data and splitting it into training and evaluation sets.

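```python
df = pd.read_csv("my_data.csv")

# iloc is zero-based and excludes the end index:
# rows 0-1499 (1500 rows) for training, rows 1500-1999 (500 rows) for evaluation
train_df = df.iloc[0:1500]
eval_df = df.iloc[1500:2000]
```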

Notice that I have 2000 rows, a relatively small dataset, but I still reserve 25% of them (500 rows) for evaluation. Holding out part of the data for evaluation is standard practice across machine learning, NLP included, so I encourage you to do the same.
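The slicing above assumes the rows of the CSV are already in random order; if the file happens to be sorted by label, the evaluation set could end up containing only one or two topics. Here is a minimal standard-library sketch of a shuffled 75/25 split. `train_eval_split` is a hypothetical helper for illustration, not part of pandas or textmining.

```python
import random

def train_eval_split(rows, eval_fraction=0.25, seed=0):
    """Shuffle a copy of `rows` and split it into (train, eval) lists."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # fixed seed makes the split reproducible
    n_train = len(shuffled) - int(len(shuffled) * eval_fraction)
    return shuffled[:n_train], shuffled[n_train:]

train_rows, eval_rows = train_eval_split(range(2000))
print(len(train_rows), len(eval_rows))  # 1500 500
```

With pandas, `df.sample(frac=1, random_state=0)` shuffles all rows of a DataFrame, after which the same `iloc` slicing applies.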

Now, let’s put the textmining package to use! It is simple enough that we need only 3 lines of code to build our first term-document matrix.

```python
# Create a term-document matrix from the training texts
tdm = textmining.TermDocumentMatrix()
for ele in train_df["text"]:
    tdm.add_doc(ele)
```

What is a term-document matrix, you ask? It’s a matrix in which every column represents one word in the corpus and every row represents one document; each cell counts how many times that word occurs in that document. Here’s an example:

Term-Document Matrices
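To make the layout concrete, here is a small standard-library sketch that builds the same kind of matrix by hand for two toy sentences. It is not how textmining works internally: it assumes a simple lowercase-and-split-on-whitespace tokenizer, sorts the vocabulary, and counts each word per document. `document_term_matrix` is a hypothetical name.

```python
from collections import Counter

def document_term_matrix(docs):
    """Return (vocabulary, rows): one row per document, one column per word."""
    tokenized = [doc.lower().split() for doc in docs]  # assumption: whitespace tokenizer
    vocabulary = sorted({word for tokens in tokenized for word in tokens})
    rows = []
    for tokens in tokenized:
        counts = Counter(tokens)
        rows.append([counts[word] for word in vocabulary])  # missing words count as 0
    return vocabulary, rows

vocab, matrix = document_term_matrix(["the cat sat", "the dog sat on the cat"])
print(vocab)   # ['cat', 'dog', 'on', 'sat', 'the']
print(matrix)  # [[1, 0, 0, 1, 1], [1, 1, 1, 1, 2]]
```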

That term-document matrix records how often every word occurs in every document in the corpus. We also need a separate term-document matrix for each topic in our dataset, built only from the documents carrying that label. This is what lets us estimate the vital probability of seeing a word given a topic, p(word | topic).

```python
topicSpecTDM = {}  # Dictionary of label: TDM entries
list_of_labels = ["Animal", "Space", "Sports"]

for ele in list_of_labels:
    sdf = train_df[train_df["labels"] == ele]  # All docs with that label
    stdm = textmining.TermDocumentMatrix()
    for row in sdf["text"]:
        stdm.add_doc(row)  # Add all docs to this topic's TDM
    topicSpecTDM[ele] = stdm
```

So, topicSpecTDM is a dictionary where each key is a topic and each value is the specific TDM for that topic.

Next, we’re going to compute the relative frequency of each word within each topic. The resulting data structure can be indexed as topic_term_freq[topic][word] to look up the probability of seeing a word given a topic, as I mentioned earlier.

```python
# Get topic term frequencies (observations)
topic_term_freq = {}  # Dictionary of topic_name: term_frequency_dictionary entries

for ele, topic_tdm in topicSpecTDM.items():
    rows = iter(topic_tdm.rows(cutoff=1))  # cutoff=1 keeps the topic's whole vocabulary
    words = next(rows)  # The first row is the header: the list of words (columns)
    topic_spec_term_count = dict.fromkeys(words, 0)  # Start every word at 0

    # Each remaining row holds one document's counts, in the same order as the header,
    # so pair counts with words via zip instead of relying on dictionary key order
    for row in rows:
        for word, count in zip(words, row):
            topic_spec_term_count[word] += count

    # Convert from counts to relative frequencies p(word | topic)
    topicSum = float(sum(topic_spec_term_count.values()))
    topic_term_freq[ele] = {word: count / topicSum
                            for word, count in topic_spec_term_count.items()}
```
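For comparison, here is a compact standard-library sketch of the same pipeline from labelled texts to p(word | topic), without textmining: one `Counter` per topic plays the role of that topic’s summed TDM rows, and dividing by the topic’s total word count gives the relative frequencies. The whitespace tokenizer and the `topic_term_frequencies` name are assumptions for illustration.

```python
from collections import Counter

def topic_term_frequencies(texts, labels):
    """Return {label: {word: p(word | label)}} from parallel lists of texts and labels."""
    counts = {}
    for text, label in zip(texts, labels):
        # Assumption: a simple lowercase + whitespace tokenizer
        counts.setdefault(label, Counter()).update(text.lower().split())

    freqs = {}
    for label, counter in counts.items():
        total = float(sum(counter.values()))
        freqs[label] = {word: n / total for word, n in counter.items()}
    return freqs

freqs = topic_term_frequencies(["ball goal ball", "ball", "orbit"],
                               ["Sports", "Sports", "Space"])
print(freqs)  # {'Sports': {'ball': 0.75, 'goal': 0.25}, 'Space': {'orbit': 1.0}}
```

Each topic’s frequencies sum to 1, which is what makes them usable as the probabilities p(word | topic).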

This is a great time to look at what Bayes’ Theorem will ultimately help us accomplish.

Bayes Theorem Itself

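Here’s Bayes’ Theorem for you, in case you’ve forgotten:

$$
p(\text{topic} \mid \text{sentence}) = \frac{p(\text{sentence} \mid \text{topic}) \, p(\text{topic})}{p(\text{sentence})}
$$

The value denoted as p(topic) is the prior, and the left-hand side of the equation is the posterior probability, the thing we’re trying to maximize.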

Now, you may be asking: how are we supposed to get p(sentence|topic)? That seems like a crucial and unsolvable aspect of the problem!

And you’re right that we can’t measure it directly. To estimate it, we have to make an assumption that statisticians would probably not be so excited about:

The probability of seeing a sentence given a topic is the product of the probabilities of seeing each of its words given that topic. In other words, we treat every word as independent of the others once the topic is known.
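Written as a formula, for a sentence made up of the words $w_1, \dots, w_n$, the naive independence assumption says:

$$
p(\text{sentence} \mid \text{topic}) \approx \prod_{i=1}^{n} p(w_i \mid \text{topic})
$$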

But that’s hideous, right? This scheme entirely ignores the position of each word and the relative importance of each word.

Well, first of all, that’s why it’s called NAIVE Bayes instead of METICULOUS Bayes.

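Second of all, it turns out that ignoring things like word position and relative importance isn’t a barrier to the success of this model. For classification we only need the correct topic to receive the highest score, not accurate probabilities, and differences in word frequencies between topics are often strong enough to get that ranking right. Naive Bayes is a great tool for many applications, and its naivety has not stopped its growth.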

In our final step, we’re going to maximize the numerator of the right-hand side (which we can calculate) to maximize the left-hand side (which we can’t calculate directly). Note that the denominator of the right-hand side, p(sentence), is the same for every topic, so it doesn’t change which topic wins and we can ignore it.
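Putting the pieces together, the classifier picks the topic with the largest numerator. Because the logarithm is monotonically increasing, maximizing the sum of log-probabilities picks the same topic while avoiding the numerical underflow that comes from multiplying many small numbers:

$$
\widehat{\text{topic}} = \arg\max_{\text{topic}} \; p(\text{topic}) \prod_{i=1}^{n} p(w_i \mid \text{topic}) = \arg\max_{\text{topic}} \left[ \log p(\text{topic}) + \sum_{i=1}^{n} \log p(w_i \mid \text{topic}) \right]
$$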

Maximum-Likelihood Estimation

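Here’s our code for finding the topic that maximizes the posterior probability given the words in a sentence. (Strictly speaking, because we include the prior, this is maximum a posteriori (MAP) estimation rather than pure maximum-likelihood estimation, although the function below is named MLE.) Enjoy!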

```python
def MLE(tweet):
    '''
    Bayesian maximum likelihood estimation:
    multiply the probability of seeing a topic (the prior) by the probability
    of that topic generating each specific word, choose the topic that
    maximizes the posterior probability, and return the best topic and
    its (unnormalized) probability.
    '''
    best_topic = "None Found"
    best_prob = 0.0

    for topic in list_of_labels:
        spec_df = train_df[train_df["labels"] == topic]
        prior = float(spec_df.shape[0]) / train_df.shape[0]  # Prior probability of seeing a topic

        prob_of_words = 1.0
        for word in tweet.lower().split():  # Lowercase to better match the TDM vocabulary
            # Words never seen in this topic get probability 0 instead of raising a KeyError
            prob_of_words *= topic_term_freq[topic].get(word, 0.0)

        if prob_of_words * prior > best_prob:
            best_topic = topic
            best_prob = prob_of_words * prior

    return best_topic, best_prob
```
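Two practical problems come with multiplying raw relative frequencies: a word that never appeared in a topic’s training documents has no usable probability for that topic (a missing key or a zero), which wipes out the whole product, and multiplying many small numbers can underflow to 0.0 for long texts. A common remedy is Laplace (add-one) smoothing combined with summing log-probabilities. The following is a self-contained standard-library sketch of that variant, not the author’s code; `train_naive_bayes`, `classify`, the toy data, and the whitespace tokenizer are all hypothetical.

```python
import math
from collections import Counter

def train_naive_bayes(texts, labels):
    """Count documents per label and word occurrences per label."""
    label_counts = Counter(labels)
    word_counts = {label: Counter() for label in label_counts}
    for text, label in zip(texts, labels):
        word_counts[label].update(text.lower().split())  # assumption: whitespace tokenizer
    vocabulary = set()
    for counts in word_counts.values():
        vocabulary.update(counts)
    return label_counts, word_counts, vocabulary

def classify(text, label_counts, word_counts, vocabulary, alpha=1.0):
    """Return (best_label, log_score) using add-alpha smoothing in log space."""
    n_docs = sum(label_counts.values())
    vocab_size = len(vocabulary)
    best_label, best_score = None, -math.inf
    for label, n_label in label_counts.items():
        total_words = sum(word_counts[label].values())
        score = math.log(n_label / n_docs)  # log prior
        for word in text.lower().split():
            # Smoothing: unseen words get a small non-zero probability instead of 0
            p_word = (word_counts[label][word] + alpha) / (total_words + alpha * vocab_size)
            score += math.log(p_word)
        if score > best_score:
            best_label, best_score = label, score
    return best_label, best_score

texts = ["the cat purred", "a dog barked at the cat",
         "the rocket reached orbit", "stars and planets in orbit"]
labels = ["Animal", "Animal", "Space", "Space"]
model = train_naive_bayes(texts, labels)
print(classify("the dog chased a cat", *model)[0])  # Animal
```

Note that “chased” never appears in training, yet classification still works because smoothing keeps its probability above zero. Scores are log-probabilities (negative numbers), so compare them only with each other.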

All in all, Naive Bayes classifiers like the one we constructed are a simple but powerful tool for supervised learning. Unlike unsupervised topic models such as LDA, which infer topics from the co-occurrences of words in a dataset, Naive Bayes lets you make use of your own labels, which is a very powerful thing.

Final Thoughts

Consider, for example, a training dataset that has already been labelled by a human annotator. We want to make sure our model makes use of these labels instead of just generating its own topics. Not only does this ensure that the annotator can see the fruits of his/her labour, but it also ensures that the topics we see are the topics we want. If the general goal of our research study is to identify the change in the frequency of sports-related tweets during June, we want to ensure that the model outputs that sports topic. Naive Bayes gives us this assurance.

If you’re looking to get more advanced, try adding a text preprocessing step! Cleaning your text to remove links and stray punctuation marks can go a long way in saving storage space, and it can ensure that your model focuses on the right words. Lemmatization (converting the different forms of a word to a common base form) will also help make sure that “swim” and “swimming” aren’t two different words in your TDM. Additionally, consider using a stopword list and TF-IDF weighting to filter out uninformative words.
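As a starting point, here is a minimal standard-library sketch of the cleaning step: it lowercases the text, removes links with a regular expression, replaces everything except letters and whitespace with spaces, and drops stopwords. The `STOPWORDS` set is a tiny hypothetical example, and lemmatization is left out because it needs a linguistic library (for example NLTK’s `WordNetLemmatizer` or spaCy).

```python
import re

# Hypothetical, deliberately tiny stopword list; a real project would use a fuller one
STOPWORDS = {"a", "an", "and", "the", "of", "to", "in", "is", "at"}

def clean_text(text):
    """Lowercase, strip links and non-letter characters, and drop stopwords."""
    text = text.lower()
    text = re.sub(r"https?://\S+|www\.\S+", " ", text)  # remove links
    text = re.sub(r"[^a-z\s]", " ", text)              # remove punctuation and digits
    return " ".join(word for word in text.split() if word not in STOPWORDS)

print(clean_text("Swimming at the pool!! https://t.co/xyz"))  # swimming pool
```

Applying the same function to both the training texts and the tweets you classify keeps the two vocabularies consistent.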

I hope you’ve enjoyed this introduction to Naive Bayes! Topic classification and topic modelling are areas of growing importance in the Natural Language Processing world, especially for social science researchers.