This article was published as a part of the Data Science Blogathon

I was first introduced to Docker when I had to containerize a machine learning workflow in my organization. At that time, I realized that Docker makes machine learning engineers’ lives much easier. Not only can we deploy and run applications using Docker, but it also ensures that our applications run in the same environment on other systems. This matters for any data science or machine learning application that must produce reproducible results: Docker addresses it by packaging the environment, libraries, and drivers together with the application. So, let’s dive into it.

List of contents

- Introduction to Docker

- Docker architecture elements

- Docker Installation

- Docker CLI used to run image

- Create a docker system image for a sample ML project

- Run Docker System image inside a container

Introduction to Docker

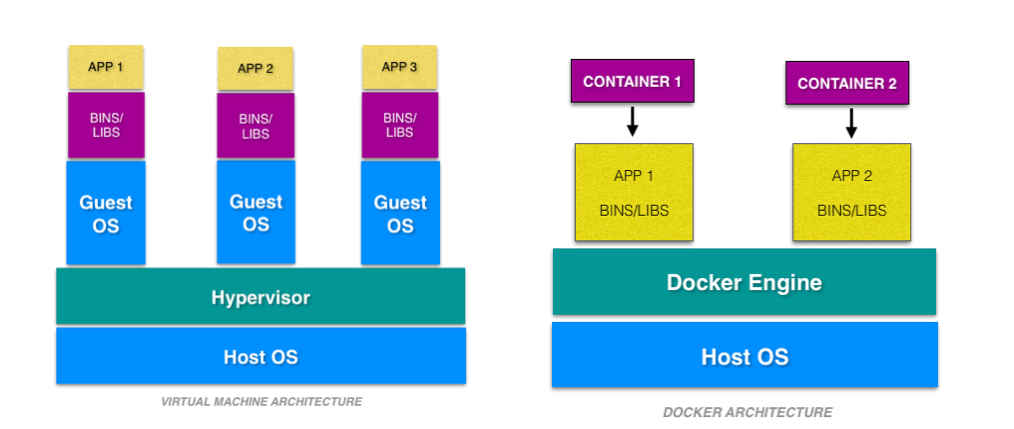

Earlier, we used virtual machines (VMs) to run different apps on one system. A virtual machine runs a separate guest operating system with access to host resources through a hypervisor. Each VM runs its own guest OS (like Linux or Windows) irrespective of the host OS. The main drawbacks of VMs are: each guest OS runs its own kernel, which consumes many resources for its libraries and dependencies; a VM usually takes a long time to boot its guest OS; and deployment can be lengthy.

Image Source: https://cloudacademy.com

So, what does Docker offer us to overcome the drawbacks?

Docker is a containerization (OS-level virtualization) technology that makes it easy to deploy and manage applications using containers. A container shares the kernel of the host machine with other containers. Containers are isolated from each other and bundle their own software, libraries, and dependencies. They are lightweight because, unlike VMs, they don’t run an individual guest OS, which also makes them quick to start. To create, run, and manage containers, we use Docker as the management application.
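One way to see the shared kernel in practice is to compare the kernel release reported by the host with the one reported inside a container. The sketch below assumes a Linux host with a running Docker daemon and uses the official `alpine` image, which is my own choice for illustration and is not used elsewhere in this article. On Windows or macOS, Docker Desktop runs containers inside a small Linux VM, so the two values will differ there.

```shell
#!/usr/bin/env bash
# Compare the host kernel release with the one seen inside a container.
# Assumption: the official "alpine" image is used only for illustration.

host_kernel="$(uname -r)"
echo "Host kernel:      ${host_kernel}"

if command -v docker >/dev/null 2>&1 && docker info >/dev/null 2>&1; then
    # --rm removes the container as soon as the command exits.
    container_kernel="$(docker run --rm alpine uname -r)"
    echo "Container kernel: ${container_kernel}"
else
    echo "Docker is not available; skipping the container check."
fi
```

On a Linux host the two lines should show the same release string, because the container does not boot a kernel of its own.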

Docker Architectural Elements

Docker Engine – Docker Engine is used to containerize applications. It is a client-server application made up of a long-running daemon process, an API for communicating with the daemon, and a CLI client.

Docker daemon – Docker uses a client-server architecture in which clients send requests to the Docker daemon. The daemon is a long-running background process that receives API requests and manages Docker objects such as containers and images.
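The split between client and daemon can be observed from the command line. The sketch below is illustrative: `docker --version` is answered by the CLI alone, whereas the server version has to be fetched from the daemon, so the second call is expected to fail when the daemon is not running. The variable name `daemon_status` is my own.

```shell
#!/usr/bin/env bash
# Query the client (CLI) and the server (daemon) separately.

if command -v docker >/dev/null 2>&1; then
    # Answered by the CLI itself; no daemon is needed for this call.
    echo "Client: $(docker --version)"

    # The server version comes from the daemon through its API,
    # so this fails if the daemon cannot be reached.
    if server_version="$(docker version --format '{{.Server.Version}}' 2>/dev/null)"; then
        daemon_status="running"
        echo "Daemon: version ${server_version}"
    else
        daemon_status="unreachable"
        echo "Daemon: not reachable (has it been started?)"
    fi
else
    daemon_status="no-client"
    echo "The docker CLI is not installed."
fi
```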

Images – A Docker image is a file that contains the application code, libraries, and dependencies needed to run an application. It is a read-only template with instructions for creating a Docker container. To build our own customized image, we write a Dockerfile that defines the steps needed to create the image and then build it.

Docker Registry – After a Docker image has been created, we can push it to a public registry or to a private one, so that the application can be shared with others or access can be restricted as required. Examples of registries include DockerHub and AWS ECR.
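As a rough sketch of sharing an image through a registry: the image is first tagged with a registry namespace and then pushed. The account name `myuser` below is hypothetical, and `new_image` is assumed to be an image that already exists locally; replace both with your own values.

```shell
#!/usr/bin/env bash
# Tag a local image with a registry namespace and push it.
# Assumptions: "myuser" is a hypothetical DockerHub account and
# "new_image" is an image that has already been built locally.

local_image="new_image"
namespace="myuser"
remote_ref="${namespace}/${local_image}:latest"
echo "Remote reference: ${remote_ref}"

# Run the registry commands only if the local image actually exists.
if command -v docker >/dev/null 2>&1 \
   && docker image inspect "${local_image}" >/dev/null 2>&1; then
    docker login                                  # prompts for credentials
    docker tag "${local_image}" "${remote_ref}"   # add the registry name to the image
    docker push "${remote_ref}"                   # upload the image layers
else
    echo "Image ${local_image} not found locally; nothing to push."
fi
```

Anyone with access to that repository could then fetch the image with `docker pull` and the same reference.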

Containers – A container is a runnable instance of an image; we can create, start, or stop a container using Docker. Containers are –

- Lightweight – Containers share the system kernel, so they don’t need any separate OS to run.

- Secure – Containers are isolated from one another by default, which limits how much applications running in different containers can interfere with each other.

- Standard and Portable – Containers are portable between registry servers and container hosts.

Docker Installation

To install Docker, follow the installation instructions at the links below.

For Windows User Click Here

For Mac User Click Here

Docker CLI and Use

After installing Docker, you can verify the installation from the command prompt. Running the command “docker” with no arguments prints the usage text and the list of available commands.

Once Docker is ready, we can download any of the images already published on DockerHub, which is a public Docker image repository. Let’s try a simple one: log in to DockerHub and search for the image hello-world (look for the official image to avoid any problems). Now, pull this image using the following command in the CLI:

docker pull hello-world

This pulls the latest version of the image to your system.

To list the Docker images on your system, run the command below in the CLI:

docker image ls -a

Now, let’s run one of the Docker images. We have already downloaded the “hello-world” image from DockerHub; to run it, use the command –

docker run hello-world

This prints a short greeting confirming that the installation is working.
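The pull, list, and run steps above can be combined into one small script. This is only a sketch: it checks whether hello-world is already cached locally, pulls it if not, and then runs it. The variable name `action` is my own, and the `--rm` flag (which removes the container once it exits) is an addition not used in the commands above.

```shell
#!/usr/bin/env bash
# Pull hello-world only if it is not cached locally, then run it.

image="hello-world"

if command -v docker >/dev/null 2>&1 && docker info >/dev/null 2>&1; then
    if docker image inspect "${image}" >/dev/null 2>&1; then
        action="cached"          # image is already on this machine
    elif docker pull "${image}"; then
        action="pulled"          # image was downloaded from DockerHub
    else
        action="pull-failed"     # for example, no network access
    fi
    # Run the image only when it is available locally.
    if [ "${action}" != "pull-failed" ]; then
        docker run --rm "${image}"
    fi
else
    action="skipped"
    echo "Docker is not available; nothing was run."
fi
echo "Result: ${action}"
```

Note that `docker run` pulls a missing image automatically, so the explicit check mainly makes each step visible.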

Create a Docker image for a sample project

Now let’s look at an example of containerizing a very simple piece of machine learning code. As a starter, I have created an elementary machine learning model that predicts salary using linear regression (the code is available in my personal git repository). Before containerizing your code, you need to create a Dockerfile. A Dockerfile is a text file that contains all the commands needed to assemble a Docker image. The docker build command reads the Dockerfile, executes its instructions in order, and produces the image. For example, I ran the following command to build the image:

docker build -t new_image .

The build output lists each Dockerfile step as it is executed.

You can also check the Docker image from the CLI using the command –

docker image ls

In the output, the new image appears in the list, which confirms that we have successfully created a Docker image for the project.
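The Dockerfile itself is not reproduced in this article, so here is a minimal sketch of what one might look like for a small Python project. Everything in it is an assumption rather than the content of my repository: the `python:3.9-slim` base image, the file names `requirements.txt` and `app.py`, and port 8000 (chosen to match the run command in the next section). The script writes the file into a temporary directory so that it cannot overwrite an existing Dockerfile.

```shell
#!/usr/bin/env bash
# Write a minimal Dockerfile for a small Python ML service.
# Assumptions (not taken from the article's repository): the entry point
# is app.py, dependencies are listed in requirements.txt, and the app
# listens on port 8000.

project_dir="$(mktemp -d)"

cat > "${project_dir}/Dockerfile" <<'EOF'
# Start from a slim official Python base image.
FROM python:3.9-slim
# Work inside /app in the image.
WORKDIR /app
# Install dependencies first so this layer is cached between builds.
COPY requirements.txt .
RUN pip install --no-cache-dir -r requirements.txt
# Copy the rest of the project code.
COPY . .
# Document the port the app listens on.
EXPOSE 8000
# Command executed when a container starts.
CMD ["python", "app.py"]
EOF

echo "Dockerfile written to ${project_dir}/Dockerfile"
```

After copying such a file next to the project code, `docker build -t new_image .` would execute these instructions from top to bottom.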

Run the Docker image inside a container

Now that we have the Docker image, how can we run it in a container? A container has its own file system, networking, and isolated processes. To run a Docker image inside a container, use the command below –

docker run -d -p 8000:8000 new_image

Similarly, to stop a running container we can use the command

docker stop <container_id>

After starting containers, we also need to check whether a container is running and how many containers have started or exited. We use the command docker ps -a to confirm this.

In the output, we can see the two images running in two different containers, with the container IDs listed in the first column.
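The run, list, and stop steps can be chained in a short script. This is a sketch under the assumption that an image named `new_image` exists locally and serves on port 8000; the `--filter` and `--format` options are additions of mine to narrow the listing to that image.

```shell
#!/usr/bin/env bash
# Start a container, list it, and stop it again.
# Assumption: an image named "new_image" exists locally.

image="new_image"

if command -v docker >/dev/null 2>&1 \
   && docker image inspect "${image}" >/dev/null 2>&1; then
    # -d runs the container in the background and prints its ID;
    # -p 8000:8000 maps host port 8000 to container port 8000.
    container_id="$(docker run -d -p 8000:8000 "${image}")"

    # List only the containers created from this image.
    docker ps -a --filter "ancestor=${image}" --format '{{.ID}}  {{.Status}}'

    # Stop the container using the ID captured above.
    docker stop "${container_id}"
    result="stopped"
else
    result="skipped"
    echo "Image ${image} not found; build it first."
fi
```

Capturing the ID printed by `docker run -d` avoids having to copy it by hand from the `docker ps` output.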

Conclusion

Docker has many benefits. One of the most common reasons to use Docker is that, after testing an application in a containerized environment, we can deploy it on any other system where Docker is running. Because the image carries its own libraries and dependencies, the programmer can expect the containerized application to behave the same way on that system.