Introduction

DuckDB is designed to support analytical query workloads, also known as Online analytical processing (OLAP).” The duck was chosen as the mascot for this database management system (DBMS) because it is a very versatile animal that can fly, walk and swim. In short, it is designed to be your DBMS for local analysis. So let’s see what features it has to offer.

Structured Query Language,SQL or “sequel” for the more intimate, is a fundamental language for anyone who wants to deal with databases.

Some time ago, I was researching how to use SQL in python, so I found this wonderful solution called DuckDB. In my opinion, the best parts of this tool are the ability to read CSV and Parquet files directly in the query and its excellent performance.

It is also worth remembering that DuckDB focuses on analysis activities rather than transactions like SQLite.

DuckDB Features

- Simple installation

- Embedded: no server management

- Processing and storing tabular datasets, e.g. from CSV or Parquet files

- Single file storage format

- Fast analytical processing

- Fast transfer between R/Python and RDBMS

- Interactive data analysis, e.g. Joining & aggregate multiple large tables

- Concurrent large changes to multiple large tables, e.g. appending rows, adding/removing/updating columns

So, let’s see what you will learn in this article!

font: https://duckdb.org/why_duckdb

What will you Learn?

In this article, you will learn how to install, import the DuckDB, read and write CSV and Parquet files, the Meta Queries and DBeaver SQL IDE; in short:

- Getting started

- Import and export CSV and parquet files

- Meta queries

- DBeaver SQL IDE

Getting Started with DuckDB

So enough talk, and let’s get our hands dirty with the code 😉

Installing DuckDB in your system:

Import and connect to a database:

import duckdb

import pandas as pd

con = duckdb.connect()

df = pd.DataFrame({'Column 1' : [100]})

con.execute("SELECT * FROM df").df() # query the Pandas DataFrame "df"Import and Export Files

So let’s start with the data scientist’s great friend, the CSV file.

Load a CSV file into a table using the read_csv_auto function:

con.execute("SELECT * FROM read_csv_auto('my_local_file.csv')").df()

Create a new table:

con.execute("CREATE TABLE tbl AS SELECT * FROM read_csv_auto('my_local_file.csv')")

Export the data from a table to a CSV file:

con.execute("COPY tbl TO 'my_export_file.csv' (HEADER, DELIMITER ',')")

If you have not been introduced to him yet, it is time to meet him. The Parquet file is essential when working with a large amount of data; we won’t go into detail in this article regarding its advantages, but see at this point that working with this file format with DuckDB is quite simple and easy.

Load a Parquet file into a table using the read_parquet function:

con.execute("SELECT * FROM read_parquet('my_local_file.parquet')").df()

Create a new table:

con.execute("CREATE TABLE tbl5 AS SELECT * FROM read_parquet('my_local_file.parquet')")

Export the data from a table to a Parquet file:

con.execute("COPY tbl TO 'my_export_file.parquet' (FORMAT PARQUET)")

Ok! But how about the huge table? Let’s talk about it in the next section!

Meta Queries

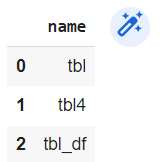

Well, it can be very convenient to list them all after creating several tables, can’t it? We can do this with SHOW TABLES.

con.execute("SHOW TABLES").df()

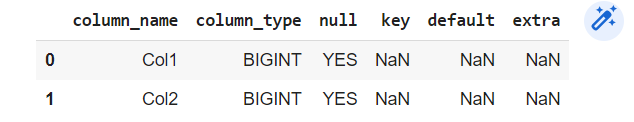

To view the schema of a table, use DESCRIBE followed by the table name.

df = pd.DataFrame({'Col1' : [100,90,30],'Col2' : [1,5,8]})

con.execute("CREATE TABLE tbl_df AS SELECT * FROM df")

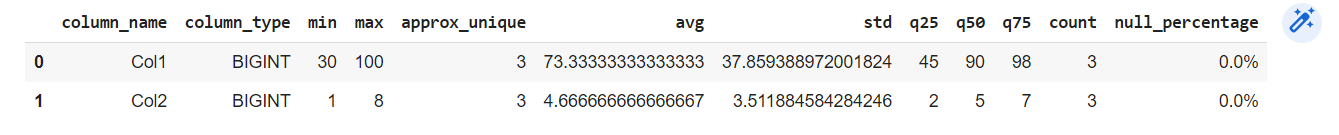

In my opinion, the most useful command is SUMMARIZE; it returns the column name, column type, minimum value, maximum value, the number of unique values, the mean, the standard deviation, the quartiles, the number of values, and the percentage of null values. A lot of information with a single command!

con.execute("SUMMARIZE tbl").df()

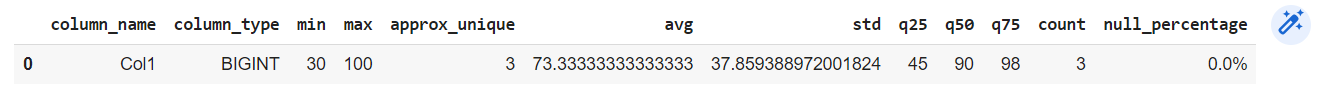

In reality there is so much information that sometimes we don’t want it, or it can be difficult to see. We can use the SUMMARIZE command in conjunction with SELECT to get a summary of only the desired columns, for example.

con.execute("SUMMARIZE SELECT Col1 FROM tbl").df()

Well, If you like SQL and Python, then you must give DuckDB a try!

DBeaver SQL IDE

“DBeaver is a powerful and popular desktop sql editor and integrated development environment (IDE). It has both an open source and enterprise version. It is useful for visually inspecting the available tables in DuckDB and for quickly building complex queries. DuckDB’s JDBC connector allows DBeaver to query DuckDB files, and by extension, any other files that DuckDB can access (like parquet files).”

If you have liked DubkDB, you will love this IDE; it is straightforward and lightweight. In this section, we are going to install, connect, and create a database in memory, local, and you know what? Let’s create, read and save a parquet file!

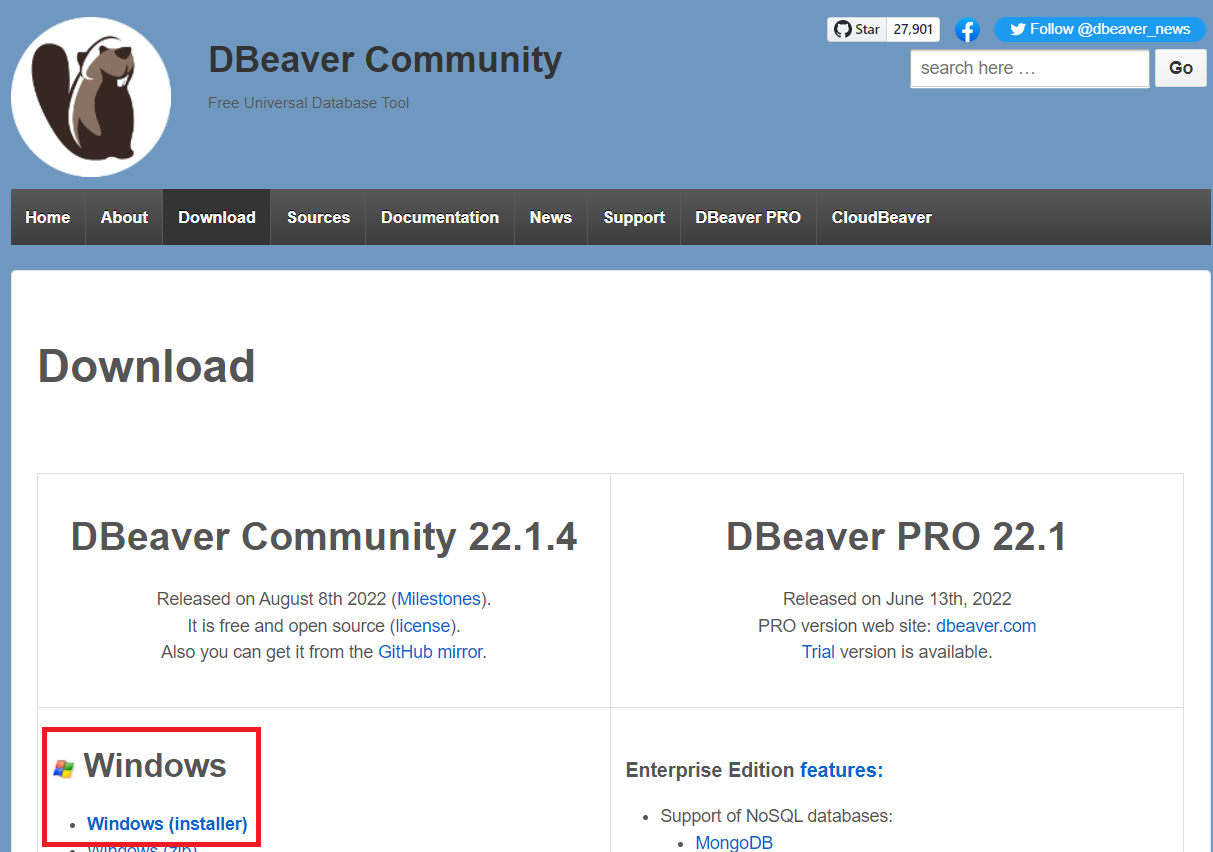

So let’s go! In this tutorial, I will show you how to install the Windows version: the download page.

At the beginning of the installation, you can choose your preferred language:

DBeaver Community:

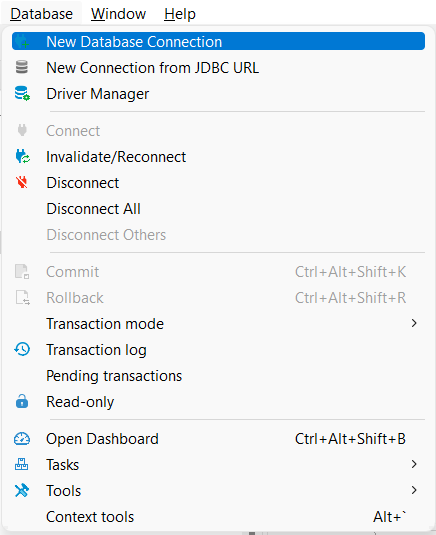

Now, in the Database menu, click on New Data Base Connection:

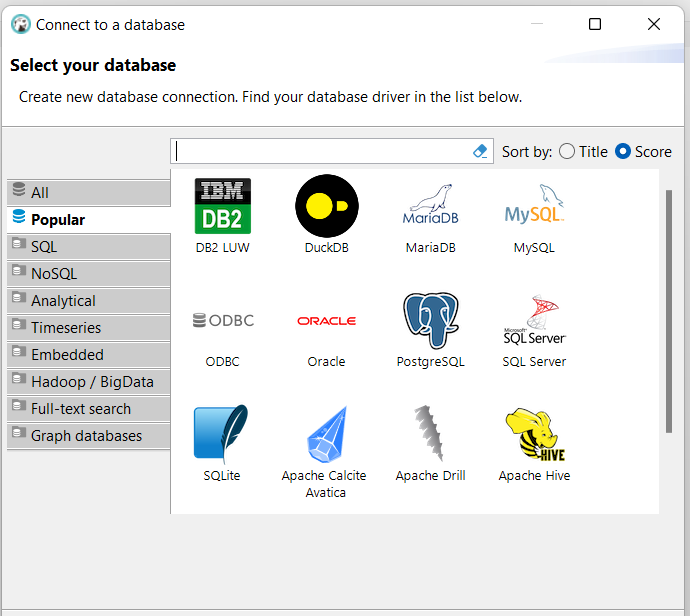

Guess which one we will choose now? Sure! DuckDB, isn’t it?

On the “Path” to start playing, we can fill in with “:memory:”:

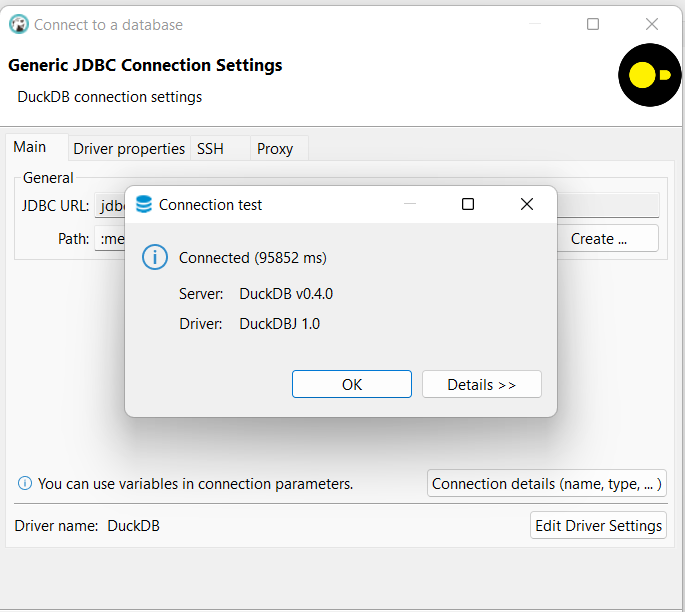

Click on the button “Test Connection…”:

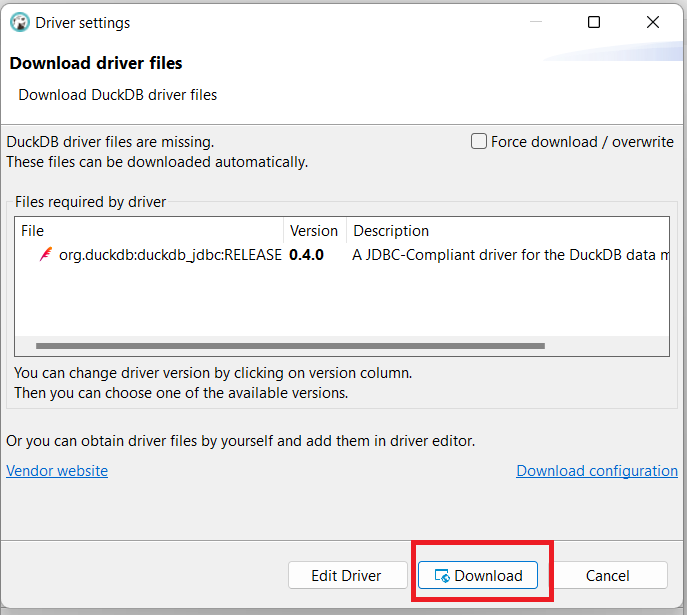

Click “Download” to download DuckDB’s JDBC driver from Maven. Once the download is complete, click “OK”, then click “Finish”:

If you got this message, the connection was successful. Congratulations!

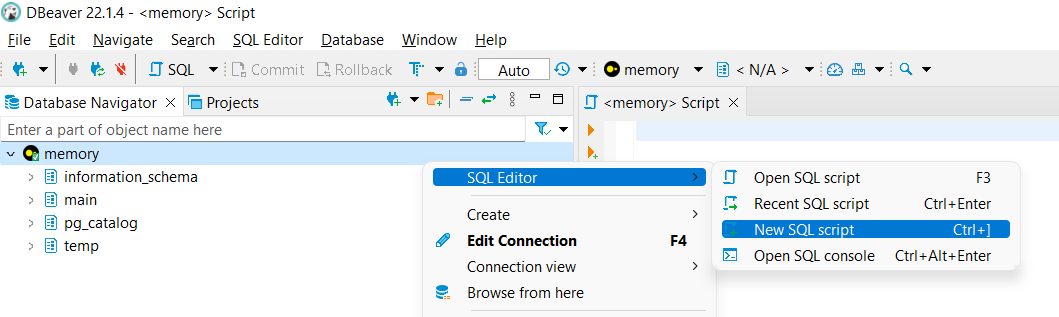

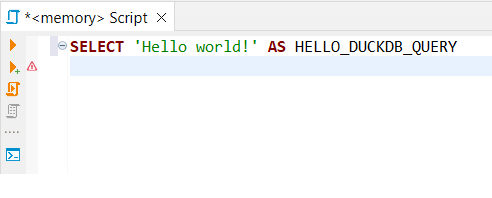

The big moment of our “Hello World!” in DBeaver SQL IDE:

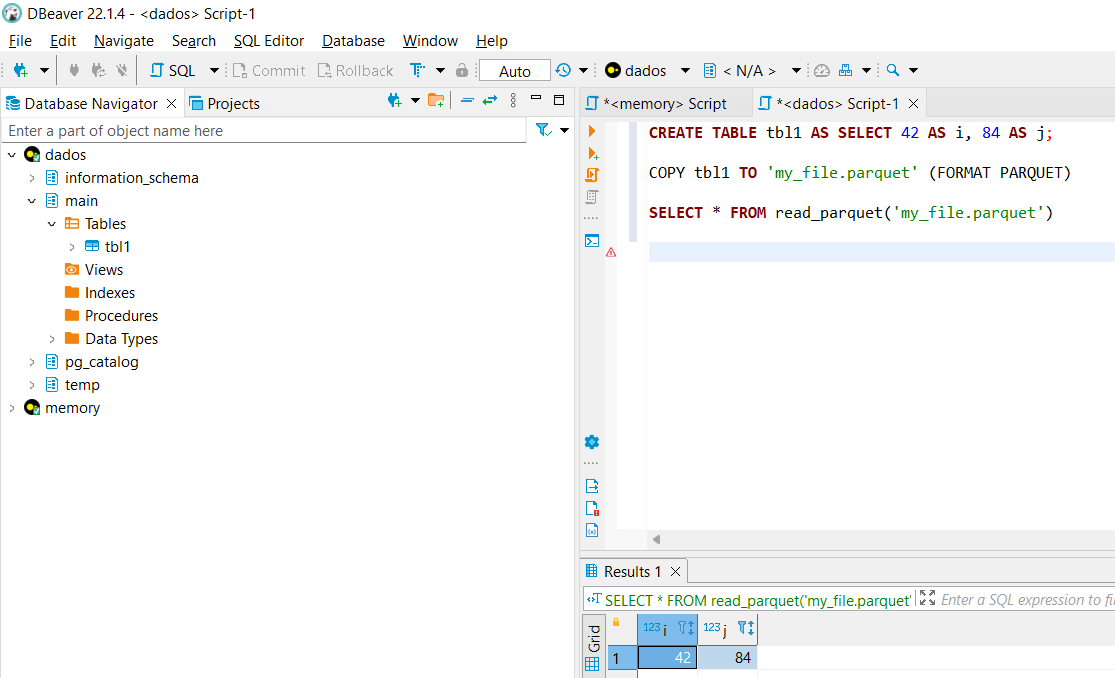

And now the big final moment! Create and write a Parquet file:

Conclusion

In this article, you have had your first contact with DuckDB, learned how to read and write CSVs and Parquet files, summarize your table, and install and use DBeaver Community SQL IDE.

Key takeaways:

- On the initial point, you learned how to install and import DuckDB;

- To help you practice daily, you learn how to read and write CSV and Parquet files;

- Now you also know how to display all tables and summarize them;

- We know how an Integrated Development Environment can help you organize all your work, so we introduce you to the DBeaver SQL IDE.

Finally, I hope you learned from this article; feel free to leave a friendly comment, and see you next time!

If you need additional information, see the documentation at https://duckdb.org/.

<script src=”https://gist.github.com/rafaelaugusto1983/c2fcadcd9c75526e2642df8f38e1d2c5.js“>

Thanks for reading!

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.