This article was published as a part of the Data Science Blogathon.

Introduction

What is a Data Pipeline?

Advantages of Data Pipeline

The main benefits of implementing a well-designed data pipeline include:

When building data processing applications, a data pipeline allows for repeatable patterns: individual pipelines can be reused and repurposed for new data flows, which helps the IT infrastructure scale incrementally. Repeatable patterns also build security into the architecture from the ground up, so that good, reusable security practices can be enforced as the application grows.
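As a minimal sketch of the reuse idea above, a pipeline can be modeled as an ordered list of small functions, so that an existing pipeline becomes a building block for a new data flow. The names `build_pipeline`, `strip_whitespace`, `drop_empty`, and `to_upper` are hypothetical and chosen only for illustration; they do not come from any particular library.

```python
from functools import reduce
from typing import Callable, List

# A stage takes a list of records and returns a new list of records.
Stage = Callable[[List[str]], List[str]]


def build_pipeline(*stages: Stage) -> Stage:
    """Compose stages left to right into a single callable pipeline."""
    def run(records: List[str]) -> List[str]:
        return reduce(lambda data, stage: stage(data), stages, records)
    return run


def strip_whitespace(records: List[str]) -> List[str]:
    return [r.strip() for r in records]


def drop_empty(records: List[str]) -> List[str]:
    return [r for r in records if r]


def to_upper(records: List[str]) -> List[str]:
    return [r.upper() for r in records]


# One pipeline is defined once...
clean = build_pipeline(strip_whitespace, drop_empty)
# ...and reused as a stage inside a second pipeline for a new data flow.
clean_and_upper = build_pipeline(clean, to_upper)

if __name__ == "__main__":
    print(clean([" a ", "", "b "]))
    print(clean_and_upper([" a ", "  "]))
```

Because a composed pipeline has the same shape as a single stage, it can be dropped into another pipeline without any changes.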

Data pipelines help build a shared understanding of how data flows through the system and give visibility into the tools and techniques used. Data engineers can also set up telemetry for data flows throughout the pipeline, allowing processing performance to be monitored continuously.
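One simple way to picture this kind of telemetry is to wrap each stage so that it records its duration and record counts. This is only a sketch under assumptions: `timed_stage` and the metric field names are hypothetical, and a real deployment would likely send these measurements to a monitoring system rather than a Python list.

```python
import time
from typing import Any, Callable, Dict, List


def timed_stage(
    name: str,
    func: Callable[[list], list],
    metrics: List[Dict[str, Any]],
) -> Callable[[list], list]:
    """Wrap a stage so every call appends a timing record to `metrics`."""
    def wrapper(data: list) -> list:
        start = time.perf_counter()
        result = func(data)
        metrics.append({
            "stage": name,
            "seconds": time.perf_counter() - start,
            "records_in": len(data),
            "records_out": len(result),
        })
        return result
    return wrapper


metrics: List[Dict[str, Any]] = []
# Hypothetical stage: remove duplicates and return the values sorted.
dedupe = timed_stage("dedupe", lambda rows: sorted(set(rows)), metrics)
output = dedupe([3, 1, 3, 2])

if __name__ == "__main__":
    print(output)
    print(metrics)
```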

Important Parts of the Data Pipeline

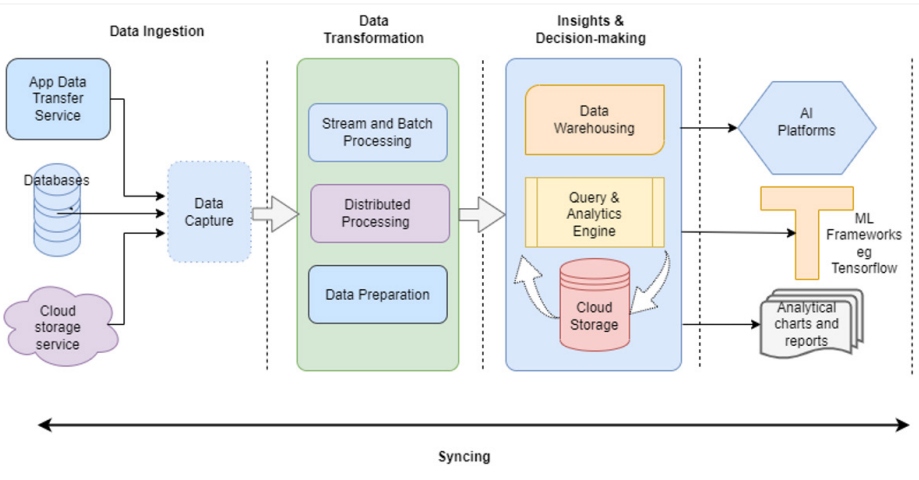

Data Pipeline Processes

Although different use cases require different workflows, the following are some common data pipeline processes:

Data pipeline

Although the complexity of a data pipeline varies with the use case, the amount of data to be extracted, and the frequency of data processing, these are the most common stages of a data pipeline:

Export / Import

This stage covers the ingestion of data from its origin, also known as the source. Data entry points include IoT sensors, data processing applications, online transaction processing applications, social media input forms, public data sets, APIs, etc. Data pipelines also let you extract information from storage systems, such as data lakes and data warehouses.

Transformation

This stage covers the changes made to the data as it moves from one system to another. The data is modified so that it matches the format supported by the target system, such as an analytics application.
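As an illustration of such a change, the sketch below converts a raw record whose fields are all strings into the typed shape a target system might expect. The field names, the `DD/MM/YYYY` source date format, and the `transform_order` function are assumptions made up for this example.

```python
from datetime import datetime
from typing import Any, Dict


def transform_order(raw: Dict[str, str]) -> Dict[str, Any]:
    """Convert a raw, all-string record into a hypothetical target schema."""
    return {
        # The target system is assumed to want an integer key.
        "order_id": int(raw["id"]),
        # Store money as whole cents to avoid fractional amounts downstream.
        "amount_cents": round(float(raw["amount"]) * 100),
        # Normalize the assumed DD/MM/YYYY source format to ISO 8601.
        "ordered_on": datetime.strptime(raw["date"], "%d/%m/%Y").date().isoformat(),
    }


raw_order = {"id": "42", "amount": "19.99", "date": "03/02/2024"}
transformed = transform_order(raw_order)

if __name__ == "__main__":
    print(transformed)
```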

Processing

This stage covers all the activities involved in ingesting, transforming, and loading data at the output side. Other data processing tasks include merging, sorting, aggregating, and augmenting.
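A minimal sketch of a processing step that merges two inputs, sorts the result, and sums values per group is shown below. The data, the field names, and the `merge_sort_aggregate` function are hypothetical; production pipelines would more likely use a dataframe library or a processing engine for this.

```python
from collections import defaultdict
from typing import Any, Dict, List, Tuple


def merge_sort_aggregate(
    orders: List[Dict[str, Any]],
    customers: Dict[int, str],
) -> Tuple[List[Dict[str, Any]], Dict[str, float]]:
    # Merge: attach each customer's region to their orders,
    # skipping orders whose customer is unknown.
    merged = [
        {**order, "region": customers[order["customer_id"]]}
        for order in orders
        if order["customer_id"] in customers
    ]
    # Sort: largest orders first.
    merged.sort(key=lambda row: row["amount"], reverse=True)
    # Aggregate: total amount per region.
    totals: Dict[str, float] = defaultdict(float)
    for row in merged:
        totals[row["region"]] += row["amount"]
    return merged, dict(totals)


orders = [
    {"customer_id": 1, "amount": 20.0},
    {"customer_id": 2, "amount": 35.0},
    {"customer_id": 1, "amount": 5.0},
]
customers = {1: "EU", 2: "US"}
merged_rows, region_totals = merge_sort_aggregate(orders, customers)

if __name__ == "__main__":
    print(merged_rows)
    print(region_totals)
```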

Syncing

Data Pipeline Options

Stream Processing

Batch Processing

Lambda Processing

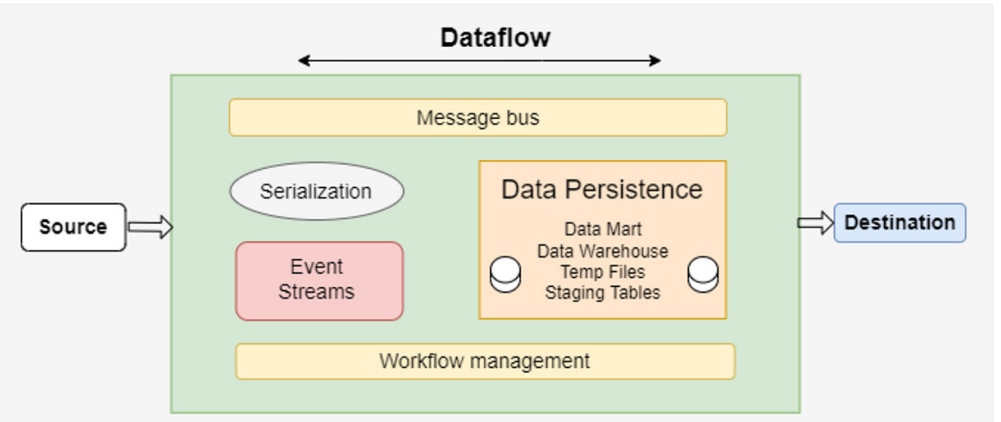

Key Components of Data Pipeline

- Data serialization – Serialization defines common formats that make data easy to identify and access, and is responsible for converting data objects into byte streams.

- Event frameworks – These frameworks identify the actions and processes that lead to a change in the system. Events are captured for analysis and processing to support decisions based on application and user behavior.

- Workflow management tools – These tools help organize tasks within the pipeline based on their directed dependencies. They also facilitate the automation, monitoring, and management of pipeline processes.

- Message bus – Message buses are an important part of the pipeline. They allow data to be exchanged between systems and ensure compatibility between different data sets.

- Data persistence – The backing storage system to which data is written and from which it is read. These systems allow different data sources to be integrated by providing a data access protocol across different data formats.
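Two of the components above can be sketched with the Python standard library alone: serialization as a round trip between a data object and a byte stream, and workflow management as ordering tasks by their dependencies. This is an illustrative sketch, not how any specific tool works: JSON is just one possible format, the task names are hypothetical, and `graphlib` requires Python 3.9 or later.

```python
import json
from graphlib import TopologicalSorter
from typing import Any, Dict


def serialize(record: Dict[str, Any]) -> bytes:
    """Convert a data object into a byte stream (JSON encoded as UTF-8)."""
    return json.dumps(record, sort_keys=True).encode("utf-8")


def deserialize(payload: bytes) -> Dict[str, Any]:
    """Convert the byte stream back into a data object."""
    return json.loads(payload.decode("utf-8"))


# Hypothetical pipeline tasks: each key maps to the set of tasks it depends on.
dependencies = {
    "transform": {"extract"},
    "load": {"transform"},
    "report": {"load"},
}
# A workflow tool resolves an execution order that respects the dependencies.
run_order = list(TopologicalSorter(dependencies).static_order())

if __name__ == "__main__":
    payload = serialize({"id": 1, "name": "sensor-a"})
    print(payload)
    print(deserialize(payload))
    print(run_order)
```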

Best Practices for Using a Pipeline

- Enable Concurrent Task Execution

Big data applications often need to run several data analysis tasks at the same time. A modern data pipeline should be built on an elastic, scalable, shared architecture that can handle multiple data flows at a time. A well-designed pipeline should load and process data from all data flows, which DataOps teams can then analyze.

- Use Extensible Tools with Built-in Connectivity

Modern pipelines are built on a number of frameworks and tools that connect and interact with one another. Tools with built-in integrations should be used to reduce the time, labor, and cost of building connections between the various systems in the pipeline.

- Invest in Proper Data Wrangling

Because inconsistencies often lead to poor data quality, it is recommended that the pipeline use appropriate data wrangling tools to resolve inconsistencies across different data entities. With clean data, DataOps teams can gather accurate insights to make effective decisions.

- Enforce Data Cataloging and Ownership

It is important to maintain a catalog of each data source, the business process that owns the data set, and the users or processes that access it. This provides complete visibility into the data sets in use, which strengthens trust in data quality and authenticity.
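To illustrate the first practice in the list above, handling several data flows at a time, here is a minimal sketch that processes independent flows concurrently with a thread pool. The flow names, the `process_flow` function, and the choice of threads are assumptions for illustration; a real pipeline would typically rely on a distributed processing engine instead.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Dict, List


def process_flow(name: str, records: List[int]) -> Dict[str, Any]:
    """Hypothetical per-flow work: count the records and sum their values."""
    return {"flow": name, "count": len(records), "total": sum(records)}


def run_flows(flows: Dict[str, List[int]], max_workers: int = 3) -> Dict[str, Dict[str, Any]]:
    """Submit every flow to the pool, then collect results keyed by flow name."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {
            name: pool.submit(process_flow, name, records)
            for name, records in flows.items()
        }
        return {name: future.result() for name, future in futures.items()}


flows = {"clicks": [1, 2, 3], "orders": [10, 20], "sensors": [5]}
results = run_flows(flows)

if __name__ == "__main__":
    for name, summary in results.items():
        print(name, summary)
```

Results are keyed by flow name rather than completion order, so the output stays stable no matter which flow finishes first.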

Conclusion

To wrap up, let's recap what we have covered:

- What a data pipeline is and how it is used in industry to keep data flowing continuously,

- Data pipeline infrastructure, which includes Import/Export, Transformation, Syncing, and Processing,

- Advantages such as faster IT service development, increased application visibility, and improved productivity, along with the key components.

This is all you need to get started building a data pipeline.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.