This article was published as a part of the Data Science Blogathon.

Introduction

A data source can be the original site where data is created or where physical information is first digitized. Still, even the most polished data can be used as a source if it is accessed and used by another process. A data source can be a database, a flat file, real-time measurements from physical equipment, scraped online data, or any of the numerous static and streaming data providers available on the internet.

Data collectors gather data for a variety of purposes. However, the primary goal of data collection is to put a researcher in a position to make predictions about future probabilities and trends.

Source: https://www.pexels.com/photo/black-and-gray-laptop-computer-turned-on-doing-computer-codes-1181271/

Primary and secondary data are the two types of data obtained. The former is gathered by a researcher using first-hand sources, whereas the latter is gathered by someone other than the user.

Based on Nature

Primary Data Sources

Sources that provide primary data are known as primary data sources. Primary data is information gathered directly from first-hand experience; it is the information you collect yourself for the purpose of a particular research project.

Primary data gathering is a straightforward method suited to a company’s particular requirements. It’s a time-consuming procedure, but it provides valuable first-hand knowledge in many business situations.

Examples include census data collected by the government and stock prices taken directly from the stock market.

Secondary Data Sources

These data sources provide secondary data. Secondary data has previously been gathered for another reason but is relevant to your investigation. Additionally, the data is collected by someone other than the team who needs the data.

Secondhand information is referred to as secondary data. It is not the first time it has been used, and that’s why it’s referred to as secondary.

Secondary data sources contribute to the interpretation and analysis of primary data. They may describe primary materials in depth and frequently use them to support a particular thesis or point of view.

Identifying and Gathering Data

The first step in identifying data is deciding what information needs to be gathered, which depends on the goal we want to achieve. Once we have identified the data, we need to choose the sources from which to extract it and create a data collection strategy. At this step, we also decide on the period over which to collect data and how much data is required to arrive at a viable analysis.

Data sources can be internal or external to the company, and they can be primary, secondary, or third-party, depending on whether we are getting data directly from the source, accessing it from external data sources, or buying it from data aggregators.

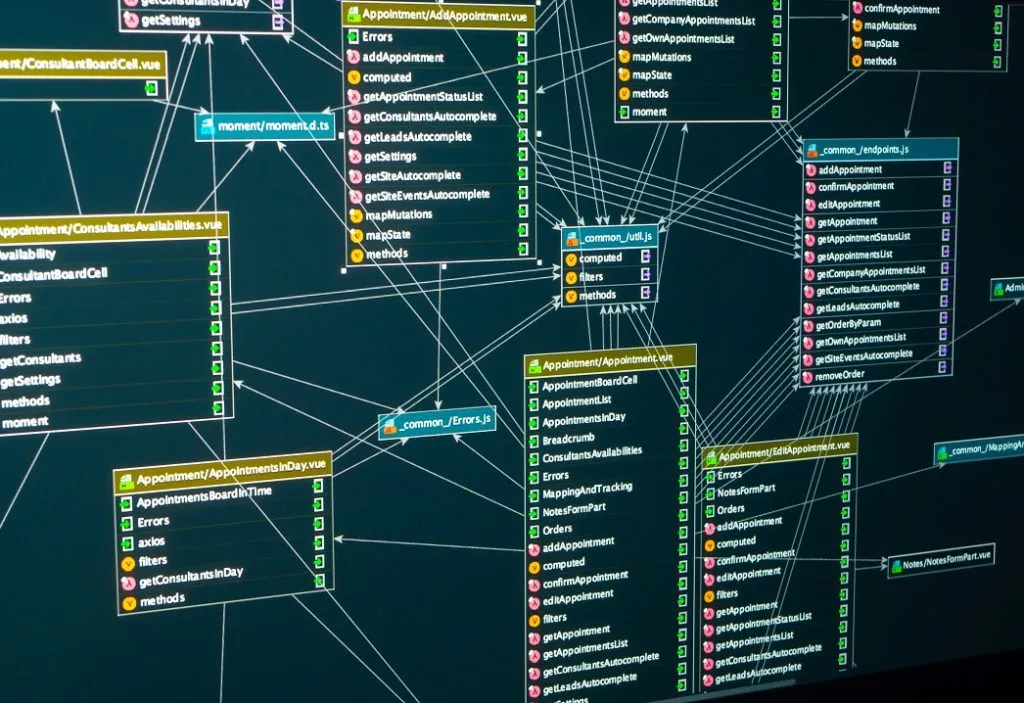

Databases

Databases, the web, social media, interactive platforms, sensor devices, data exchanges, surveys, and observation studies are some of the data sources we may use. Data from these diverse sources is identified and acquired, then merged using a range of tools and methodologies to create a unified interface for querying and manipulating it. The data we identify, the source of that data, and the methods we use to collect it all raise quality, security, and privacy concerns that must be considered at this stage.

Source: https://corporatefinanceinstitute.com/resources/knowledge/data-analysis/database/

Relational database systems, such as SQL Server, Oracle, MySQL, and IBM DB2, store data in an organized manner. Data from databases and data warehouses can be used as a source for analysis. Data from a retail transaction system, for example, can be used to analyze sales in different regions, while data from a customer relationship management system can be used to forecast sales. Beyond the organization, there are also publicly and privately available datasets.

SQL stands for Structured Query Language, and it is a querying language for extracting data from relational databases. SQL provides simple commands for specifying what data should be retrieved from the database, the table from which it should be extracted, grouping records with matching values, dictating the order in which query results should be displayed, and limiting the number of results that can be returned by the query, among a variety of other features and functionalities.
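The clauses described above can be sketched with Python's built-in `sqlite3` module. This is a minimal illustration, not a production query: the `sales` table, its columns, and the rows are made up for the example.

```python
import sqlite3

# In-memory database with a hypothetical "sales" table (illustrative data only).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales (region, amount) VALUES (?, ?)",
    [("North", 120.0), ("South", 80.0), ("North", 60.0),
     ("East", 200.0), ("South", 40.0), ("West", 30.0)],
)

# SELECT chooses the columns, FROM names the table, GROUP BY combines rows
# with matching values, ORDER BY sorts the output, and LIMIT caps the row count.
query = """
    SELECT region, SUM(amount) AS total
    FROM sales
    GROUP BY region
    ORDER BY total DESC
    LIMIT 3
"""
top_regions = conn.execute(query).fetchall()
conn.close()

for region, total in top_regions:
    print(region, total)
```

The same statement would work largely unchanged against other relational databases, although each system has its own SQL dialect for some features.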

APIs

APIs, or Application Programming Interfaces, and Web Services are provided by many data providers and websites, allowing users or programs to communicate with them and access data for processing or analysis. APIs and Web Services typically listen for incoming requests from users or applications, which might take the form of web requests or network requests, and return data as plain text, XML, HTML, JSON, or media files.

Source: https://www.browserstack.com/blog/diving-into-the-world-of-apis/

APIs are widely used to retrieve data from a number of data sources. An application that needs data calls an API endpoint that exposes it; databases, online services, and data markets are examples of such endpoints. APIs are also used to validate data. For example, a data analyst might use an API to validate postal addresses and zip codes.
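As a rough sketch of the request and response cycle, the example below sends a web request and decodes a JSON reply using only the Python standard library. To keep it runnable offline, it starts a small stub server on the local machine; the `/validate` path, the `zip` parameter, and the response fields are hypothetical and do not correspond to any real provider's API.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class StubHandler(BaseHTTPRequestHandler):
    """Hypothetical stand-in for a data provider's API endpoint."""

    def do_GET(self):
        # Always answer with the same made-up JSON document.
        body = json.dumps({"zip": "10001", "valid": True}).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # silence per-request logging


# Bypass any system proxy settings so the request goes straight to localhost.
opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))


def fetch_json(url):
    """Send a GET request and decode the JSON body of the response."""
    with opener.open(url, timeout=5) as response:
        return json.loads(response.read().decode("utf-8"))


server = HTTPServer(("127.0.0.1", 0), StubHandler)  # port 0 = any free port
threading.Thread(target=server.serve_forever, daemon=True).start()

url = f"http://127.0.0.1:{server.server_port}/validate?zip=10001"
result = fetch_json(url)
server.shutdown()
server.server_close()
print(result)
```

Against a real service, only `fetch_json` and the URL would be needed, usually together with whatever authentication the provider requires.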

Web Scraping

Web scraping is a technique for obtaining meaningful data from unstructured sources. Also known as screen scraping, web harvesting, and web data extraction, it allows you to retrieve particular data from websites based on predefined parameters.

Source: https://www.toptal.com/python/web-scraping-with-python

Web scrapers may harvest text, contact information, photos, videos, product items, and other information from a website. Web scraping is commonly used for a variety of purposes, including gathering product details from retailers, manufacturers, and eCommerce websites to provide price comparisons, generating sales leads from public data sources, extracting data from posts and authors on various forums and communities, and gathering training and testing datasets for machine learning models. BeautifulSoup, Scrapy, Pandas, and Selenium are some prominent web scraping tools.
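To illustrate the idea of extracting product details from page markup, here is a minimal sketch that uses Python's standard `html.parser` module rather than the tools named above, purely to avoid extra dependencies. The HTML snippet, class names, and products are invented for the example; a real scraper would download the page first and should respect the site's terms of use.

```python
from html.parser import HTMLParser

# Made-up product page markup; a real scraper would download this from a site.
PAGE = """
<ul>
  <li class="product"><span class="name">Kettle</span><span class="price">24.99</span></li>
  <li class="product"><span class="name">Toaster</span><span class="price">31.50</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collect the text of <span class="name"> and <span class="price"> tags."""

    def __init__(self):
        super().__init__()
        self.current_field = None  # which field the next text belongs to
        self.products = []

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        if tag == "li" and css_class == "product":
            self.products.append({})  # start a new product record
        elif tag == "span" and css_class in ("name", "price"):
            self.current_field = css_class

    def handle_data(self, data):
        # Only keep text that appears inside a name or price span.
        if self.current_field and self.products:
            self.products[-1][self.current_field] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self.current_field = None


parser = ProductParser()
parser.feed(PAGE)
prices = {p["name"]: float(p["price"]) for p in parser.products}
print(prices)
```

Libraries such as BeautifulSoup or Scrapy offer more convenient selectors for the same task on larger, messier pages.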

Data Streams

Data streams are another popular source of continuous data, flowing from instruments, IoT devices and apps, GPS units in cars, computer programs, websites, and social media posts. This information is often timestamped and geotagged for geographic identification. Examples of data streams and their uses include stock and market tickers for financial trading, retail transaction streams for forecasting demand and managing supply chains, surveillance and video feeds for threat detection, and social media feeds for sentiment analysis.
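One common way to handle a stream is to process each reading as it arrives instead of loading everything first. The sketch below assumes a made-up price ticker, simulated with a Python generator, and computes a rolling average over the most recent readings; the prices, timestamps, and window size are illustrative only.

```python
from collections import deque
from datetime import datetime, timedelta


def ticker_stream():
    """Yield (timestamp, price) readings; a made-up stand-in for a live feed."""
    start = datetime(2024, 1, 1, 9, 30)
    for i, price in enumerate([100.0, 101.0, 103.0, 102.0, 106.0]):
        yield start + timedelta(seconds=i), price


def rolling_mean(stream, window=3):
    """Process readings one at a time, keeping only the last `window` prices."""
    recent = deque(maxlen=window)
    for timestamp, price in stream:
        recent.append(price)
        yield timestamp, sum(recent) / len(recent)


averages = [round(avg, 2) for _, avg in rolling_mean(ticker_stream())]
print(averages)
```

Because both functions are generators, memory use stays constant no matter how long the feed runs.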

Data Mining

The heart of the data analysis process is data mining, the process of extracting knowledge from data. It is a multidisciplinary field that employs pattern recognition technologies, statistical analysis, and mathematical methods. Its purpose is to identify correlations in data, recognize patterns and variances, and forecast probabilities.

Data mining is used in a variety of sectors and specialities. For example, financial organizations might use data mining models to profile consumer habits, wants, and disposable income in order to deliver customized advertising. They can also use data mining models to monitor customer transactions for anomalous behaviour and identify fraudulent transactions. In healthcare, statistical models can be applied to forecast a patient's chance of developing certain health problems and to prioritize treatment options. Data mining separates the noise from the true information, allowing firms to concentrate their efforts on what matters.
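As a toy illustration of spotting anomalous transactions, the sketch below flags amounts that lie far from a customer's average using a z-score. This is one simple statistical approach among many, not how any particular institution detects fraud; the amounts and the cutoff of 2 standard deviations are assumptions chosen for the example.

```python
from statistics import mean, stdev

# Hypothetical transaction amounts for one customer.
amounts = [42.0, 38.5, 45.0, 40.0, 39.5, 41.0, 950.0, 43.5]

avg = mean(amounts)
spread = stdev(amounts)  # sample standard deviation

# Flag amounts more than 2 standard deviations from the mean (assumed cutoff).
flagged = [a for a in amounts if abs(a - avg) / spread > 2]
print(flagged)
```

With very small samples a single extreme value inflates the standard deviation, so real systems tend to rely on more robust measures and far more history.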

Data Mining Tools

Spreadsheets

Basic data mining operations are usually performed using spreadsheets such as Microsoft Excel and Google Sheets. Spreadsheets can store data exported from other systems in an easily accessible and readable form. When you have a large quantity of data to go through and evaluate, pivot tables can help you highlight key features of your data. They also make comparisons between different sets of data simpler. Data Mining Client for Excel, XLMiner, and KnowledgeMiner for Excel are Excel add-ins that allow you to do basic mining activities, including classification, regression, association rules, clustering, and model development. Google Sheets also has a number of add-ons for analysis and mining, including Text Analysis, Text Mining, and Google Analytics.

R

R is a popular data mining tool. R is a free and open-source programming language and an implementation of the S language, which was developed at Bell Laboratories (formerly AT&T, now Lucent Technologies). Data scientists, machine learning engineers, and statisticians choose R for statistical computing, analytics, and machine learning tasks. R libraries such as dplyr, caret, ggplot2, Shiny, and data.table are used in machine learning and data mining.

Python

Python is a popular programming language that allows programmers to work quickly and efficiently, and it is in high demand for machine learning. Many Python libraries, such as NumPy, pandas, SciPy, scikit-learn, Matplotlib, TensorFlow, and Keras, make machine learning tasks relatively simple to implement.

Python packages for data mining, such as pandas and NumPy, are widely used. Pandas is a free and open-source package for data structuring and analysis, and it is one of the most widely used Python packages for data analysis. It reads data in many common formats, such as CSV, Excel, JSON, and SQL tables, and provides a convenient platform for organizing, sorting, and manipulating that data. Using pandas, you can perform fundamental numerical operations such as mean, median, mode, and range; compute statistics and answer questions about data correlation and distribution; examine data visually and quantitatively; and visualize data with the aid of other Python tools.
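The operations listed above might look like the following in practice. This is a small sketch that assumes pandas is installed; the column names and values are invented for illustration.

```python
import pandas as pd

# Made-up sales records; in practice these might come from pd.read_csv(...).
df = pd.DataFrame({
    "region": ["North", "South", "North", "East", "South"],
    "units": [10, 4, 6, 8, 4],
    "revenue": [100.0, 40.0, 60.0, 80.0, 40.0],
})

# Fundamental numerical operations on one column.
summary = {
    "mean": df["units"].mean(),
    "median": df["units"].median(),
    "mode": df["units"].mode().iloc[0],
    "range": df["units"].max() - df["units"].min(),
}

# Correlation between two columns, and a grouped, sorted total.
correlation = df["units"].corr(df["revenue"])
by_region = df.groupby("region")["revenue"].sum().sort_values(ascending=False)

print(summary)
print(correlation)
print(by_region)
```

For plotting, a call such as `by_region.plot(kind="bar")` hands the result to Matplotlib, which is one of the other Python tools pandas relies on for visualization.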

NumPy is a Python package for mathematical processing and data preparation. It provides fast multidimensional arrays and a large set of built-in mathematical functions that data mining code can build on. When working with Python to undertake data mining and statistical analysis, Jupyter Notebooks have become a tool of choice for data scientists and data analysts.
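A typical data preparation step is rescaling features so that they are comparable. The sketch below assumes NumPy is installed and standardizes each column of a small, made-up array to zero mean and unit variance; it is an illustration of array arithmetic, not a complete preprocessing pipeline.

```python
import numpy as np

# Two made-up features on very different scales (one per column).
data = np.array([[1.0, 200.0],
                 [2.0, 400.0],
                 [3.0, 600.0]])

# Column-wise mean and (population) standard deviation.
col_mean = data.mean(axis=0)
col_std = data.std(axis=0)

# Broadcasting applies the per-column values to every row at once.
standardized = (data - col_mean) / col_std
print(standardized)
```

After this step both columns hold the same values, because each original column was an evenly spaced sequence and only its scale differed.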

Statistical Analysis System

SAS Enterprise Miner is the primary data mining product from SAS (Statistical Analysis System). Its main goal is to simplify the data mining process by providing a diverse range of analytic capabilities that assist business users with predictive analysis, which leads to better and more efficient decision making. SAS Enterprise Miner scans through massive volumes of data for patterns, associations, particular relationships, or trends that might otherwise be missed.

SAS Enterprise Miner is a sophisticated, graphical data mining workstation. It offers extensive interactive data exploration features, allowing users to uncover correlations among data. SAS can manage information from a variety of sources, mine and convert data, and perform statistical analysis. It provides non-technical users with a graphical user interface. SAS allows you to detect patterns in data using a variety of modelling tools; examine correlations and anomalies in data; analyze large datasets; and assess the trustworthiness of data analysis conclusions.

SAS syntax is generally considered easy to learn and debug. SAS can also handle big datasets and provides its users with a high level of security.

Conclusion

Good data collection requires a defined method to ensure that the data you collect is clean, consistent, and dependable. Although data can be beneficial, having too much information is cumbersome, and having the wrong data is pointless. The right data collection methods and tools can be the difference between meaningful insights and time-wasting detours.

When we need to answer a question, data gathering methods help us make informed predictions about future outcomes. It is critical that we acquire trustworthy data from the appropriate sources in order to simplify computations and analysis.


The media shown in this article is not owned by Analytics Vidhya and are used at the Author’s discretion.